Log File Analysis SEO: Optimizing Clinic Crawl for Patient Growth

Log file analysis for SEO shows directly how Googlebot interacts with a private clinic’s website, including how often it crawls and which URLs it requests. This guide explains how to use server logs to optimize crawl budget so that high-value medical content and E-E-A-T signals are indexed efficiently. Understanding HTTP status codes and user-agent strings helps diagnose indexability issues and improve search visibility in London’s competitive healthcare market. Effective log file analysis supports patient acquisition by making essential clinic information discoverable.

Abdurrahman Şimşek, a Semantic SEO Strategist with 10+ years of experience, specializes in building high-authority semantic content networks for medical clinics. His expertise ensures technical SEO strategies, including log file analysis, align with E-E-A-T principles for optimal search performance in the healthcare sector.


This guide to log file analysis for SEO explains how search engines crawl and index medical content, helping clinics optimize crawl budget and improve visibility in London’s healthcare market. Server logs provide direct evidence of Googlebot’s behavior, which makes it possible to check that high-value pages like surgeon profiles and procedure descriptions are crawled efficiently. The analysis helps diagnose and resolve technical SEO issues that affect indexability and patient acquisition.

What is Log File Analysis and Why Does it Matter for Your Clinic’s SEO?

Log file analysis is the examination of server logs to understand how search engine bots like Googlebot interact with a website. This process provides direct, unfiltered data on bot visits, requested URLs, HTTP status codes, and crawl frequency. For private medical clinics, this data is critical for optimizing search visibility and ensuring content reaches potential patients in London’s healthcare market.

Decoding Googlebot’s Visits: The Basics of Server Logs

Server logs are text files generated by a web server, recording every request made to the website. Each entry includes the requester’s IP address, a timestamp, the requested URL, the HTTP status code, and the user-agent string. Googlebot’s activity is logged, providing a granular view of its crawling behavior. This data helps understand how search engines perceive a site’s structure and content.
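The fields listed above can be pulled out of each log entry with a short script. The following is a minimal sketch, assuming the server writes the common Apache/Nginx "combined" log format; real configurations vary, and the sample line, the `LOG_PATTERN` regex, and the `parse_line` helper are illustrative rather than taken from any particular clinic's setup.

```python
import re
from datetime import datetime

# Assumed layout: Apache/Nginx "combined" log format. Adjust if your server differs.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
)

def parse_line(line):
    """Return a dict of fields for one log line, or None if the line does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    entry["time"] = datetime.strptime(entry["time"], "%d/%b/%Y:%H:%M:%S %z")
    return entry

# Invented sample line, for illustration only.
sample = (
    '66.249.66.1 - - [12/Mar/2024:06:25:14 +0000] '
    '"GET /procedures/rhinoplasty HTTP/1.1" 200 15320 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
)

entry = parse_line(sample)
print(entry["ip"], entry["time"].isoformat(), entry["url"], entry["status"])
```

Returning `None` for lines that do not match keeps the parser tolerant of malformed or differently formatted entries, which are common in large log files.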

The Critical Link Between Crawl Data and Patient Acquisition

Log analysis ensures that high-value pages—such as procedure descriptions, surgeon profiles, and patient testimonials—are crawled frequently and efficiently. This prevents search engines from wasting crawl budget on unimportant or broken pages, improving the indexability of critical content. Optimized crawling improves visibility, attracting prospective patients.

How Log Files Uncover ‘Cost of Retrieval’ Issues on Medical Websites

Log file analysis helps address ‘Cost of Retrieval’ (CoR), a concept that matters particularly for complex medical websites. CoR refers to the resources Google expends to crawl, process, and understand content. Inefficient crawling, revealed through server logs, increases this cost and can hinder the visibility of important medical information. By identifying and fixing these inefficiencies, clinics can reduce their CoR and improve SEO performance.

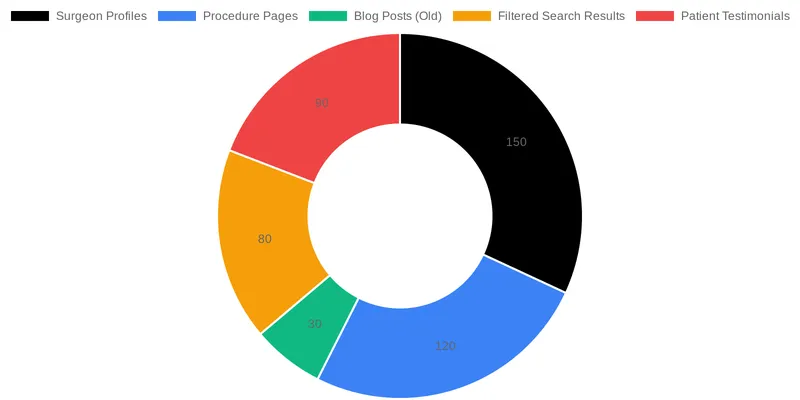

Identifying Wasted Crawl Budget on Low-Value Pages

Server logs reveal where Googlebot spends its time. They expose instances where the bot frequently visits low-value URLs, such as old blog posts, filtered search results, or broken pages, instead of high-E-E-A-T medical content. This misallocation of resources is wasted crawl budget. Optimizing crawl budget through log file insights is one way to lower the ‘Cost of Retrieval’ for complex medical websites, because it directs Google’s attention to the pages that drive patient acquisition.
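One way to see where crawl budget goes is to group Googlebot requests by site section and compare their shares. The sketch below assumes the log has already been reduced to `(url, user_agent)` pairs; the `section_of` and `crawl_share` helpers, the bucket name `(parameterised)`, and the sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit

def section_of(url):
    """Bucket a URL by its first path segment; URLs with a query string get their own bucket."""
    parts = urlsplit(url)
    if parts.query:
        return "(parameterised)"
    segments = [s for s in parts.path.split("/") if s]
    return "/" + segments[0] if segments else "/"

def crawl_share(requests):
    """Return each section's share of Googlebot requests, largest first."""
    googlebot_urls = [url for url, ua in requests if "Googlebot" in ua]
    counts = Counter(section_of(url) for url in googlebot_urls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {section: n / total for section, n in counts.most_common()}

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical requests, for illustration only.
requests = [
    ("/procedures/rhinoplasty", GOOGLEBOT),
    ("/search?q=botox&page=7", GOOGLEBOT),
    ("/search?q=filler", GOOGLEBOT),
    ("/surgeons/dr-example", GOOGLEBOT),
    ("/blog/2015/old-news", GOOGLEBOT),
    ("/procedures/facelift", "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"),
]

shares = crawl_share(requests)
for section, share in shares.items():
    print(f"{section}: {share:.0%}")
```

A large share for parameterised or archive URLs relative to procedure and surgeon pages is the kind of pattern worth investigating. Matching on the string "Googlebot" is a simplification, since user-agent strings can be spoofed.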

Prioritizing High-Value Content for Googlebot

Analysis of crawl patterns shows whether pages vital for patient acquisition (procedure descriptions, surgeon bios, and patient testimonials) receive adequate crawl frequency. Consistent Googlebot access to these pages suggests that Google treats them as important and keeps their indexed versions current, which supports their indexability and ranking potential. This approach helps keep valuable content available to search engines and prospective patients.

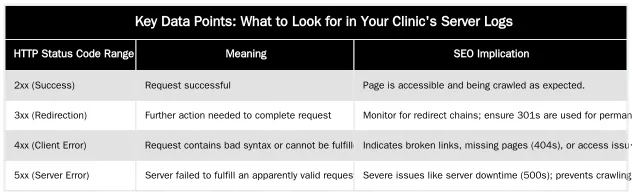

Key Data Points: What to Look for in Your Clinic’s Server Logs

Interpreting server logs requires understanding data points and their SEO implications. Focusing on HTTP status codes and user-agent strings shows how search engines interact with your site, allowing for targeted optimization.

Understanding HTTP Status Codes and Their SEO Impact

HTTP status codes are numerical responses from a server indicating a request’s outcome. Analyzing these codes in logs helps identify technical issues. 2xx codes (e.g., 200 OK) indicate successful requests. 3xx codes (e.g., 301 Moved Permanently) signify redirects, which need monitoring to prevent redirect chains. 4xx codes (e.g., 404 Not Found) point to client-side errors like broken links or missing pages that waste crawl budget and harm user experience. 5xx codes (e.g., 500 Internal Server Error) denote server-side issues that can impact indexability and site availability. A list of HTTP status codes can be found on Wikipedia.
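The status-code classes described above can be tallied in a few lines. This is a minimal sketch that assumes the log has been reduced to a list of `(url, status)` pairs; `summarise_status` and the sample hits are hypothetical.

```python
from collections import Counter, defaultdict

def summarise_status(hits):
    """hits: list of (url, status). Returns (count per status class, URLs per error status)."""
    by_class = Counter(f"{status // 100}xx" for _, status in hits)
    problem_urls = defaultdict(set)
    for url, status in hits:
        if status >= 400:  # 4xx and 5xx responses are the ones to investigate first
            problem_urls[status].add(url)
    return by_class, dict(problem_urls)

# Hypothetical Googlebot hits, for illustration only.
hits = [
    ("/procedures/rhinoplasty", 200),
    ("/old-page", 301),
    ("/missing-offer", 404),
    ("/missing-offer", 404),
    ("/book-consultation", 500),
]

by_class, problem_urls = summarise_status(hits)
print(dict(by_class))
print(problem_urls)
```

Grouping the error URLs by exact status keeps the 404 list (candidates for redirects or link fixes) separate from the 5xx list (candidates for a server-side investigation).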

Differentiating Googlebot User-Agents and Their Crawl Frequency

Google uses various user-agents to crawl websites, each with a specific purpose. For instance, Googlebot Desktop and Googlebot Smartphone crawl a site as a desktop and a mobile user, respectively, and comparing their activity provides insight into mobile-first indexing. Other user-agents include Googlebot Image, AdsBot, and Googlebot News. Tracking the activity of these user-agents helps clarify how Google perceives and renders a site’s content. Analyzing crawl frequency for specific page types, such as new procedure pages or updated surgeon bios, shows whether Googlebot is discovering and re-crawling important content often enough.
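Separating these crawlers in a log usually comes down to matching tokens in the user-agent string. The sketch below assumes the tokens `Googlebot-Image`, `Googlebot-News`, `AdsBot-Google`, and `Googlebot`, and treats a Googlebot string containing "Mobile" as the smartphone crawler; the `classify_google_agent` helper and the sample strings (including the Chrome version number) are illustrative. User-agent strings can be spoofed, so a match here is a label, not proof that the request came from Google.

```python
def classify_google_agent(user_agent):
    """Return a label for known Google crawlers, or None for everything else."""
    ua = user_agent.lower()
    # Check the specialised crawlers first, because their tokens also contain "googlebot".
    if "googlebot-image" in ua:
        return "Googlebot Image"
    if "googlebot-news" in ua:
        return "Googlebot News"
    if "adsbot-google" in ua:
        return "AdsBot"
    if "googlebot" in ua:
        return "Googlebot Smartphone" if "mobile" in ua else "Googlebot Desktop"
    return None

# Illustrative user-agent strings.
DESKTOP = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SMARTPHONE = (
    "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Mobile Safari/537.36 "
    "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
)
IMAGE = "Googlebot-Image/1.0"
ADSBOT = "AdsBot-Google (+http://www.google.com/adsbot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

for ua in (DESKTOP, SMARTPHONE, IMAGE, ADSBOT, BROWSER):
    print(classify_google_agent(ua))
```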

Leveraging Log File Insights for Strategic SEO Audits

Log file data is a component of a technical SEO audit, particularly for private clinics in competitive markets like London. Abdurrahman Şimşek, a London-based Semantic SEO Strategist, emphasizes integrating log analysis into an SEO strategy. This approach provides a deeper understanding of site health and performance, yielding actionable insights that drive patient acquisition.

Diagnosing Indexability and Crawl Frequency Issues

Log data provides direct evidence of Googlebot’s interaction, helping diagnose indexability and crawl frequency issues. Server log analysis can identify pages that are not crawled, crawled too infrequently to reflect updates, or crawled excessively despite low importance. These patterns can signal technical problems preventing medical content from ranking. Understanding these issues is crucial for diagnosing and fixing crawl budget issues, ensuring a clinic’s information is discoverable.
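Finding pages that are never crawled, or crawled too rarely, amounts to comparing a list of important URLs against the most recent Googlebot visit to each. The following sketch assumes the log has been reduced to `(url, timestamp)` pairs for Googlebot; `crawl_gaps`, the 30-day threshold, and the sample data are hypothetical choices, not recommended values.

```python
from datetime import datetime, timedelta

def crawl_gaps(important_urls, googlebot_hits, now, max_age_days=30):
    """Return (never_crawled, stale) lists for the given important URLs."""
    last_seen = {}
    for url, ts in googlebot_hits:
        if url not in last_seen or ts > last_seen[url]:
            last_seen[url] = ts  # keep only the most recent visit per URL
    cutoff = now - timedelta(days=max_age_days)
    never_crawled = [u for u in important_urls if u not in last_seen]
    stale = [u for u in important_urls if u in last_seen and last_seen[u] < cutoff]
    return never_crawled, stale

# Hypothetical data, for illustration only.
important = ["/procedures/rhinoplasty", "/surgeons/dr-example", "/testimonials"]
hits = [
    ("/procedures/rhinoplasty", datetime(2024, 3, 30)),
    ("/surgeons/dr-example", datetime(2024, 1, 5)),
    ("/surgeons/dr-example", datetime(2024, 1, 2)),
]

never_crawled, stale = crawl_gaps(important, hits, now=datetime(2024, 3, 31))
print("Never crawled:", never_crawled)
print("Not crawled in 30 days:", stale)
```

A URL can only be reported as "never crawled" within the period the logs cover, so the result depends on how much log history is retained.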

Integrating Log Data with Other Technical SEO Tools

Log file analysis complements data from other technical SEO tools. Google Search Console offers aggregated crawl statistics and index coverage reports, while tools like Screaming Frog provide site-wide crawl data. Cross-referencing log data with these tools and site analytics platforms gives SEO strategists a comprehensive view of how Google interacts with a website. This integrated approach allows for more accurate diagnosis of issues and the development of optimization strategies for London’s medical websites.
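Cross-referencing can start with simple set comparisons between the URLs a site crawler found by following internal links and the URLs Googlebot requested in the logs. The sketch below assumes both lists have already been exported and normalised to the same URL form; `cross_reference` and the sample URLs are hypothetical.

```python
def cross_reference(site_crawl_urls, googlebot_urls):
    """Compare URLs found by a site crawler with URLs Googlebot requested in the logs."""
    site_crawl, logged = set(site_crawl_urls), set(googlebot_urls)
    return {
        # Requested by Googlebot but not reachable through internal links.
        "orphan_candidates": sorted(logged - site_crawl),
        # Linked internally but absent from the logs in the period covered.
        "not_crawled_by_googlebot": sorted(site_crawl - logged),
        "in_both": sorted(site_crawl & logged),
    }

# Hypothetical exports, for illustration only.
site_crawl_urls = ["/", "/procedures/rhinoplasty", "/surgeons/dr-example"]
googlebot_urls = ["/", "/procedures/rhinoplasty", "/old-landing-page"]

report = cross_reference(site_crawl_urls, googlebot_urls)
for label, urls in report.items():
    print(label, urls)
```

Each bucket is only a starting point: an "orphan candidate" may be an old URL that Google still remembers, and a page missing from the logs may simply fall outside the log retention window.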

Beyond the Basics: Advanced Log Analysis for YMYL Sites

For Your Money or Your Life (YMYL) medical websites, advanced log analysis is a strategic tool to reinforce E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals and maintain topical authority. This approach is a differentiator in Abdurrahman Şimşek’s semantic SEO methodology, ensuring trust factors are communicated to search engines.

Ensuring E-E-A-T Signals are Efficiently Crawled

E-E-A-T is paramount for medical content. Log files allow clinics to monitor crawl activity on pages with E-E-A-T signals, such as author bios, medical qualifications, and patient testimonials. Observing consistent Googlebot visits to these pages confirms that search engines are accessing and processing these trust-building elements. This verification is vital for demonstrating credibility and authority, as outlined in Google’s guidance on E-E-A-T.

Monitoring Crawl Patterns for New Medical Content Updates

Maintaining topical authority in a YMYL niche requires timely updates and accurate medical content. Log analysis helps verify that Googlebot discovers and re-crawls new or updated pages. For instance, if a clinic updates a procedure page with new research or adds a surgeon’s profile, monitoring server logs confirms that Googlebot is processing these changes. This discovery ensures the most current information is reflected in search results, reinforcing the clinic’s position as a trusted source.
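A simple check for this is to measure how long Googlebot takes to request a page after it was updated. The sketch below assumes the update times are known (for example from a CMS) and that the log has been reduced to `(url, timestamp)` pairs for Googlebot; `recrawl_delay` and the sample data are hypothetical.

```python
from datetime import datetime

def recrawl_delay(updates, googlebot_hits):
    """Map each updated URL to the delay before its first Googlebot visit, or None."""
    delays = {}
    for url, updated_at in updates.items():
        later = [ts for hit_url, ts in googlebot_hits
                 if hit_url == url and ts >= updated_at]
        delays[url] = min(later) - updated_at if later else None
    return delays

# Hypothetical data, for illustration only.
updates = {
    "/procedures/facelift": datetime(2024, 3, 1, 9, 0),
    "/surgeons/dr-example": datetime(2024, 3, 5, 12, 0),
}
googlebot_hits = [
    ("/procedures/facelift", datetime(2024, 2, 28, 8, 0)),   # before the update, ignored
    ("/procedures/facelift", datetime(2024, 3, 3, 9, 0)),
    ("/procedures/facelift", datetime(2024, 3, 10, 14, 0)),
]

delays = recrawl_delay(updates, googlebot_hits)
for url, delay in delays.items():
    print(url, delay if delay is not None else "not re-crawled yet")
```

A request in the log shows that Googlebot fetched the page, not that the updated version has been indexed, so this check is best read alongside index coverage data.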

Transforming Log Data into Tangible Clinic Growth

Log file analysis is a strategic SEO priority for private clinics. By understanding how search engines interact with a website, clinics can optimize crawl budget, improve indexability, and ensure medical content is visible to prospective patients. This data-driven approach supports better online visibility, more organic traffic, and greater patient acquisition in London.

Conclusion

Log file analysis offers insight into the technical health and crawlability of a private clinic’s website. It provides direct evidence of Googlebot’s behavior, enabling adjustments to optimize crawl budget, enhance indexability, and ensure medical content is discovered and ranked. For clinics in London’s healthcare market, these insights are necessary for organic growth and patient acquisition. To transform log data into actionable strategies that drive clinic success, visit abdurrahmansimsek.com.

Frequently Asked Questions

Why is log file analysis particularly important for a private clinic’s SEO?

For a private clinic, ensuring high-value pages like surgeon profiles and procedure descriptions are frequently crawled is paramount. This analysis provides definitive data on Googlebot’s priorities, revealing if crawl budget is being wasted on unimportant pages. Optimizing crawl efficiency directly impacts the visibility of pages that attract new patients in competitive markets like London.

What is the single most important insight gained from log file analysis?

The most critical insight from log file analysis is the discrepancy between what you *think* Google is crawling and what it is *actually* crawling. This data exposes issues like orphan pages being ignored or faceted navigation creating crawl traps. Understanding the gap allows for precise, data-driven technical fixes that improve indexability and search performance.

How can log file analysis help improve crawl budget for medical websites?

Log file analysis helps identify pages that Googlebot visits frequently but that offer little SEO value, such as old internal search results or administrative pages. Once these “crawl traps” are identified, clinics can block them from crawling with a `Disallow` rule in `robots.txt`. A `noindex` directive is a different tool: it belongs in a robots meta tag or an `X-Robots-Tag` HTTP header, not in `robots.txt`, and it keeps a page out of the index without stopping Googlebot from requesting it. Reducing crawl-trap requests frees Googlebot’s attention for high-priority content like new service pages or doctor bios.
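Before deploying `robots.txt` rules for crawl traps, it can help to check them against URLs taken from the logs. The sketch below uses Python’s standard-library `urllib.robotparser`; the rules, the `blocked` helper, and the sample paths are hypothetical. The standard-library parser uses simple prefix matching and is not Google’s own matcher, so treat the result as a sanity check rather than a guarantee of how Googlebot will behave.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules, for illustration only.
robots_txt = """\
User-agent: *
Disallow: /search
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

def blocked(url, agent="Googlebot"):
    """Return True if the parsed rules disallow this URL for the given agent."""
    return not parser.can_fetch(agent, url)

# Paths as they might appear in a server log.
for path in ("/search?q=botox", "/wp-admin/options.php", "/procedures/rhinoplasty"):
    print(path, "blocked" if blocked(path) else "allowed")
```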

Do I need special software to perform effective log file analysis?

While manual inspection of server logs is technically possible, it’s highly inefficient and impractical for most websites. Specialized tools like Screaming Frog Log File Analyser, Splunk, or custom Python scripts are essential. These tools parse large log files, aggregate data, and visualize Googlebot’s crawl behavior in a meaningful way, making the analysis actionable.

How do server logs assist with a website migration?

Server logs are crucial during a website migration to ensure a
