Site Architecture and Crawl Budget: Optimizing Medical Site Visibility

Understanding site architecture and crawl budget is crucial for surgeons aiming to dominate London’s private healthcare search results. This article explains how an optimized website structure helps Googlebot efficiently discover and index vital medical content, such as surgeon profiles and procedure details. Readers will learn to implement logical information hierarchies, internal linking strategies, and content silos that improve crawl efficiency and build topical authority. Proper site architecture minimizes wasted crawl budget on YMYL sites, enhancing visibility and trust for medical clinics.

Abdurrahman Şimşek, a Semantic SEO Strategist, specializes in building high-authority semantic content networks for medical clinics and plastic surgeons. His expertise in holistic SEO strategy and information retrieval ensures websites achieve optimal crawl efficiency and topical authority within competitive YMYL landscapes.


For plastic surgeons and aesthetic clinics in London, a well-planned site architecture is critical to how efficiently Google spends its crawl budget discovering and ranking medical content. An optimized website structure helps attract high-value patients and establish authority in the competitive London private healthcare market.

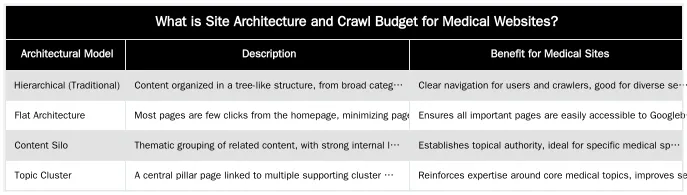

What is Site Architecture and Crawl Budget for Medical Websites?

Site architecture is the structural design of a website, organizing how pages are linked and accessed by users and search engine crawlers. Crawl budget is the number of pages Googlebot can and wants to crawl on a site within a given timeframe. For YMYL (Your Money or Your Life) medical websites, an optimized structure ensures Google efficiently discovers and indexes critical information like surgeon profiles, procedure details, and patient safety guidelines, which is necessary for high organic visibility and trust.

Defining Site Architecture: The Blueprint of Your Medical Site

Site architecture encompasses the hierarchy of pages, navigation paths, and internal linking structure. A logical architecture helps prospective patients find information on procedures, conditions, or surgeons. For search engines, a clear structure helps Googlebot discover content, understand relationships between medical topics, and prioritize pages for indexing.

Understanding Crawl Budget: Googlebot’s Efficiency Metric

Googlebot has a limited capacity for crawling pages on a website in a given period; this is the crawl budget. Using it efficiently is vital for large medical websites with many pages detailing surgical procedures, patient testimonials, and blog articles. If Googlebot spends its budget on low-value or duplicate content, it may reach updated medical information late or not at all, hindering indexation and search visibility.
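
One way to gauge crawl waste is to take the URLs Googlebot requested (for example, from server access logs) and measure what share of them hit low-value pages. The Python sketch below assumes a hypothetical rule set: any URL with query parameters, or under the made-up prefixes `/tag/`, `/search` or `/wp-json/`, counts as low-value. The rules and sample URLs are illustrative and should be adapted to your own site. Because user-agent strings can be spoofed, a real analysis should first verify that requests genuinely come from Googlebot (Google documents a reverse DNS check for this).

```python
from urllib.parse import urlsplit

# Hypothetical path prefixes this sketch treats as low-value for crawling.
LOW_VALUE_PREFIXES = ("/tag/", "/search", "/wp-json/")


def is_low_value(url: str) -> bool:
    """Flag a crawled URL as low-value if it carries query parameters
    or sits under one of the hypothetical low-value path prefixes."""
    parts = urlsplit(url)
    return bool(parts.query) or parts.path.startswith(LOW_VALUE_PREFIXES)


def crawl_waste_share(crawled_urls) -> float:
    """Return the fraction of Googlebot requests spent on low-value URLs."""
    if not crawled_urls:
        return 0.0
    wasted = sum(1 for url in crawled_urls if is_low_value(url))
    return wasted / len(crawled_urls)


# Illustrative sample of URLs Googlebot requested (not real crawl data).
sample = [
    "/procedures/facial-surgery/rhinoplasty",
    "/procedures/facial-surgery/rhinoplasty?utm_source=newsletter",
    "/tag/recovery/",
    "/surgeons/",
    "/search?q=facelift",
]
print(f"{crawl_waste_share(sample):.0%} of sampled requests hit low-value URLs")
```

With this sample, three of the five requests (the tracking-parameter URL, the tag archive and the internal search page) count as waste, so the script reports 60%.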

How Does Site Architecture Influence Googlebot’s Crawl Efficiency?

A surgeon’s website structure directly impacts how Googlebot navigates and prioritizes content. A logical information hierarchy guides the crawler to important medical pages. A disorganized structure wastes crawl budget on unimportant pages or makes it difficult to find new content.

The Role of Internal Linking in Guiding Googlebot

Internal linking creates hyperlinks between pages on the same website. An internal linking strategy distributes PageRank, signaling page importance to Googlebot. For a medical clinic, linking from a general “Plastic Surgery” page to specific procedure pages like “Rhinoplasty” or “Breast Augmentation” helps Google understand topical relationships and prioritize these service offerings. Orphan pages (pages with no internal links pointing to them) and weakly linked pages are less likely to be discovered and indexed by search engines. Effective internal linking is also the foundation for automated internal linking strategies.
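
To make the PageRank and orphan-page ideas concrete, here is a simplified Python sketch over a hypothetical internal link graph. It runs the classic power-iteration form of PageRank with a damping factor of 0.85, which only illustrates the idea; Google's actual ranking signals are far more complex and not public. It also lists orphan pages: URLs you know exist (for example, from the sitemap) that no internal link points to.

```python
# Hypothetical internal link graph: each page maps to the pages it links to.
LINKS = {
    "/": ["/plastic-surgery/", "/surgeons/"],
    "/plastic-surgery/": [
        "/",
        "/plastic-surgery/rhinoplasty/",
        "/plastic-surgery/breast-augmentation/",
    ],
    "/plastic-surgery/rhinoplasty/": ["/plastic-surgery/"],
    "/plastic-surgery/breast-augmentation/": ["/plastic-surgery/"],
    "/surgeons/": ["/"],
    "/blog/old-clinic-news/": ["/"],  # links out, but nothing links in
}


def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration over an internal link graph."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's rank evenly across its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outgoing links): spread its rank evenly.
                for other in pages:
                    new_rank[other] += damping * rank[page] / n
        rank = new_rank
    return rank


def orphan_pages(known_pages, links, homepage="/"):
    """Known pages (e.g. from the sitemap) that no internal link points to."""
    linked_to = {t for targets in links.values() for t in targets}
    return sorted(p for p in known_pages if p not in linked_to and p != homepage)


for page, score in sorted(pagerank(LINKS).items(), key=lambda kv: -kv[1]):
    print(f"{score:.3f}  {page}")
print("Orphan pages:", orphan_pages(LINKS.keys(), LINKS))
```

In this made-up graph, `/blog/old-clinic-news/` receives only the baseline score and is reported as an orphan, while the “Plastic Surgery” hub scores well above it because several pages link to it.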

URL Structure and Page Depth: Minimizing Crawl Waste

A logical URL structure is fundamental for crawl efficiency. Descriptive, hierarchical URLs (e.g., `/procedures/facial-surgery/rhinoplasty`) signal the content’s topic and position in the site structure to Googlebot. Minimizing page depth (the number of clicks from the homepage) also reduces crawl effort. Important medical procedure pages should be no more than three clicks from the homepage. Complex or dynamic URLs with many parameters can confuse crawlers and waste crawl budget, as Googlebot may struggle to identify which URLs contain unique content.
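
A breadth-first search from the homepage gives each page's click depth, which makes the three-click guideline easy to audit. The sketch below runs on a hypothetical link graph and flags pages more than three clicks deep, plus URLs carrying more than one query parameter; both thresholds are illustrative assumptions. Pages that never appear in the depth results cannot be reached through internal links at all, which means they are orphans.

```python
from collections import deque
from urllib.parse import parse_qsl, urlsplit

# Hypothetical internal link graph for a surgeon's site.
LINKS = {
    "/": ["/procedures/", "/surgeons/?specialty=face&sort=name&page=2"],
    "/procedures/": ["/procedures/facial-surgery/"],
    "/procedures/facial-surgery/": ["/procedures/facial-surgery/rhinoplasty/"],
    "/procedures/facial-surgery/rhinoplasty/": [
        "/procedures/facial-surgery/rhinoplasty/recovery/",
    ],
}


def click_depths(links, homepage="/"):
    """Breadth-first search from the homepage: minimum clicks to reach each page."""
    depth = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit in BFS is the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def audit(links, max_depth=3, max_params=1):
    """Flag pages deeper than max_depth clicks or carrying many query parameters."""
    issues = []
    for url, depth in sorted(click_depths(links).items()):
        if depth > max_depth:
            issues.append((url, f"{depth} clicks from the homepage"))
        n_params = len(parse_qsl(urlsplit(url).query))
        if n_params > max_params:
            issues.append((url, f"{n_params} query parameters"))
    return issues


for url, problem in audit(LINKS):
    print(f"{url}: {problem}")
```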

Optimizing Site Architecture: Best Practices for Surgeons’ Websites

Strategic architectural models and tools can enhance a surgeon’s online visibility by ensuring Googlebot efficiently discovers and indexes relevant medical content, improving search rankings and patient engagement.

Implementing Content Silos and Topic Clusters for Medical Specialties

Organizing content into content silos or topic clusters is an effective architectural strategy for medical websites. For example, a plastic surgeon might create a “Facial Surgery” silo with interlinked pages for rhinoplasty, facelift, and blepharoplasty, supported by related blog content. This grouping reinforces topical authority for medical specialties, helping Google understand the site’s expertise. It also directs crawl flow, helping Googlebot prioritize these topic areas.
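
A rough way to check whether a silo actually holds together is to measure how many of its outgoing internal links stay inside it. In this Python sketch the silo assignments and links are hypothetical, and membership is declared explicitly because supporting blog posts often sit outside the silo's URL path.

```python
from collections import defaultdict

# Hypothetical silo assignments. Supporting blog posts often live outside the
# silo's URL path, so membership is declared explicitly rather than parsed.
SILO = {
    "/facial-surgery/": "facial",
    "/facial-surgery/rhinoplasty/": "facial",
    "/facial-surgery/facelift/": "facial",
    "/blog/rhinoplasty-recovery-tips/": "facial",
    "/body-contouring/": "body",
    "/body-contouring/tummy-tuck/": "body",
}

# Hypothetical internal links: source page -> linked pages.
LINKS = {
    "/facial-surgery/": ["/facial-surgery/rhinoplasty/", "/facial-surgery/facelift/"],
    "/facial-surgery/rhinoplasty/": [
        "/facial-surgery/",
        "/blog/rhinoplasty-recovery-tips/",
        "/body-contouring/tummy-tuck/",
    ],
    "/blog/rhinoplasty-recovery-tips/": ["/facial-surgery/rhinoplasty/"],
    "/body-contouring/": ["/body-contouring/tummy-tuck/", "/facial-surgery/"],
}


def intra_silo_ratio(links, silo):
    """For each silo, the share of its outgoing internal links that stay inside it."""
    inside = defaultdict(int)
    total = defaultdict(int)
    for source, targets in links.items():
        home_silo = silo.get(source)
        if home_silo is None:
            continue  # pages without a silo assignment are skipped
        for target in targets:
            total[home_silo] += 1
            if silo.get(target) == home_silo:
                inside[home_silo] += 1
    return {name: inside[name] / total[name] for name in total}


for name, ratio in sorted(intra_silo_ratio(LINKS, SILO).items()):
    print(f"{name}: {ratio:.0%} of links stay in the silo")
```

A cross-silo link is not a problem in itself; the ratio is only a diagnostic for spotting silos whose links scatter across unrelated topics.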

Leveraging XML Sitemaps and Breadcrumbs for Enhanced Crawlability

An XML sitemap is a roadmap for Googlebot, listing the important pages you want indexed. It is crucial for ensuring new or updated medical content is discovered promptly. Regularly updating and submitting your XML sitemap helps Googlebot allocate its crawl budget effectively. Breadcrumbs (e.g., Home > Procedures > Facial Surgery > Rhinoplasty) are navigational links that improve user navigation and provide hierarchical signals to search engines. They help Googlebot understand the site’s structure and the context of each page.
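
Both artifacts can be generated from the site's own page list. This sketch, assuming a hypothetical domain `https://www.example.com`, uses the Python standard library to build an XML sitemap in the sitemaps.org format and a schema.org `BreadcrumbList` in JSON-LD for the Home > Procedures > Facial Surgery > Rhinoplasty trail. The pages and `lastmod` dates are illustrative; in practice they would come from the CMS.

```python
import json
import xml.etree.ElementTree as ET

SITE = "https://www.example.com"  # hypothetical clinic domain
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build an XML sitemap string from (path, lastmod) pairs; lastmod is YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for path, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = SITE + path
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


def breadcrumb_jsonld(trail):
    """schema.org BreadcrumbList for a trail of (name, path) from Home to the current page."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": SITE + path}
            for i, (name, path) in enumerate(trail, start=1)
        ],
    }


# Illustrative pages and dates.
print(build_sitemap([
    ("/", "2024-05-01"),
    ("/procedures/facial-surgery/rhinoplasty/", "2024-04-18"),
]))
print(json.dumps(breadcrumb_jsonld([
    ("Home", "/"),
    ("Procedures", "/procedures/"),
    ("Facial Surgery", "/procedures/facial-surgery/"),
    ("Rhinoplasty", "/procedures/facial-surgery/rhinoplasty/"),
]), indent=2))
```

The JSON-LD output would typically be embedded in a `<script type="application/ld+json">` tag on the matching page.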

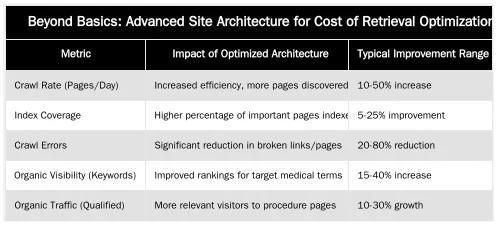

Beyond Basics: Advanced Site Architecture for Cost of Retrieval Optimization

London-based Semantic SEO Strategist Abdurrahman Şimşek states that semantic engineering principles can reduce Google’s processing cost, or ‘Cost of Retrieval’ (CoR). This improves rankings and visibility for high-value medical queries.

Semantic Site Architecture and Entity-Attribute-Value (EAV) Modeling

Semantic site architecture structures content around entities (e.g., “Rhinoplasty”) and their attributes (e.g., “recovery time,” “anesthesia type,” “ideal candidate”). This approach, using Entity-Attribute-Value (EAV) modeling, helps Google understand complex medical information more precisely. Defining these relationships reduces Google’s processing effort, optimizing ‘Cost of Retrieval’ for complex medical websites. This improves indexing accuracy and ranking for nuanced patient queries, which is critical for the London private healthcare market.
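
EAV modeling can be illustrated as a plain list of (entity, attribute, value) rows. The sketch below, with hypothetical rows and an assumed list of required attributes, groups the rows into one profile per procedure and reports which attributes each procedure's content does not yet cover. The values are placeholders, not medical facts.

```python
from collections import defaultdict

# Hypothetical EAV rows: (entity, attribute, value). Values are placeholders
# for the clinic's own surgeon-reviewed content, not medical facts.
EAV_ROWS = [
    ("Rhinoplasty", "recovery time", "<surgeon-reviewed text>"),
    ("Rhinoplasty", "anesthesia type", "<surgeon-reviewed text>"),
    ("Rhinoplasty", "ideal candidate", "<surgeon-reviewed text>"),
    ("Facelift", "recovery time", "<surgeon-reviewed text>"),
    ("Facelift", "anesthesia type", "<surgeon-reviewed text>"),
    ("Blepharoplasty", "recovery time", "<surgeon-reviewed text>"),
]

# Attributes every procedure page is expected to cover (an assumption).
REQUIRED_ATTRIBUTES = ("recovery time", "anesthesia type", "ideal candidate")


def pivot(rows):
    """Group EAV rows into one attribute -> value profile per entity."""
    profiles = defaultdict(dict)
    for entity, attribute, value in rows:
        profiles[entity][attribute] = value
    return dict(profiles)


def attribute_gaps(profiles, required=REQUIRED_ATTRIBUTES):
    """List the required attributes each entity's content does not yet cover."""
    gaps = {}
    for entity, attributes in profiles.items():
        missing = [a for a in required if a not in attributes]
        if missing:
            gaps[entity] = missing
    return gaps


print(attribute_gaps(pivot(EAV_ROWS)))
```

Here the Facelift profile lacks an “ideal candidate” attribute and Blepharoplasty lacks two, which points to content gaps on those procedure pages.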

Managing Faceted Navigation and Pagination on Large Medical Portals

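Large medical websites often use faceted navigation (e.g., filtering surgeons by specialty) and pagination (e.g., “Page 1 of 10” for archives). If not managed correctly, these features create excessive crawlable URLs, causing index bloat and wasted crawl budget. Strategies like canonical tags for duplicate URL variants, noindex directives for filtered results, robots.txt rules for parameter combinations that should not be crawled at all, and consistent URL parameter handling are essential. Note that noindex keeps a page out of the index but does not stop Googlebot from requesting it; robots.txt rules are what prevent the requests themselves. Together, these measures focus Googlebot’s crawl budget on unique content and prevent redundant pages from being indexed. For further insights, explore strategies for reducing Cost of Retrieval.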
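
The parameter handling described above can be sketched as two small rules. Assuming a hypothetical policy in which tracking parameters (`utm_*`, `gclid`) are stripped to form the canonical URL and filter parameters (`specialty`, `sort`, `gender`) mark a page for noindex, the Python below normalizes URLs and decides the directive. Pagination parameters are left in place so each paginated page stays self-canonical. The parameter lists are assumptions to replace with your site's own.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter policy for a surgeon directory with filters.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid"}
FACET_PARAMS = {"specialty", "sort", "gender"}


def canonical_url(url):
    """Drop tracking parameters, sort the rest, and remove any fragment.

    Pagination (e.g. ?page=2) is kept, so each paginated page stays
    self-canonical instead of pointing at page 1.
    """
    parts = urlsplit(url)
    kept = sorted((k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def should_noindex(url):
    """Filtered (faceted) result pages get a noindex directive in this sketch."""
    params = {k for k, _ in parse_qsl(urlsplit(url).query)}
    return bool(params & FACET_PARAMS)


for url in [
    "https://www.example.com/surgeons/?utm_source=ads&page=2",
    "https://www.example.com/surgeons/?specialty=facial&sort=name",
]:
    print(canonical_url(url), "noindex" if should_noindex(url) else "index")
```

Sorting the remaining parameters means `?sort=name&specialty=facial` and `?specialty=facial&sort=name` collapse to a single canonical form instead of two crawlable duplicates.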

Measuring the Impact: How Improved Architecture Boosts Medical SEO

Surgeons and clinic managers can monitor key metrics to assess site architecture changes. An optimized crawl budget provides SEO benefits that impact patient acquisition and clinic revenue.

Key Metrics: Crawl Stats, Index Coverage, and Organic Visibility

Google Search Console provides data for monitoring crawl activity. The Crawl Stats report shows total crawl requests, download size, average response time, and a breakdown of responses by status code, including errors. A stable crawl rate and fewer errors indicate efficient crawl budget utilization. Better architecture can improve index coverage (more important medical pages indexed), which Search Console tracks in its Page indexing report (formerly Index Coverage). This supports higher organic rankings for target keywords, such as plastic surgery and aesthetic treatments in London.
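
Index coverage can also be checked by comparing two URL lists. The sketch assumes you have the URLs submitted in your XML sitemap and a list of URLs confirmed as indexed (for example, exported from Search Console); both lists below are illustrative.

```python
def coverage_report(submitted, indexed):
    """Compare sitemap-submitted URLs with URLs confirmed as indexed."""
    submitted, indexed = set(submitted), set(indexed)
    covered = submitted & indexed
    return {
        "coverage": len(covered) / len(submitted) if submitted else 0.0,
        "not_indexed": sorted(submitted - indexed),
        "indexed_but_not_submitted": sorted(indexed - submitted),
    }


# Illustrative inputs: sitemap URLs vs. an export of indexed pages.
submitted = [
    "/procedures/facial-surgery/rhinoplasty/",
    "/procedures/facial-surgery/facelift/",
    "/procedures/body-contouring/tummy-tuck/",
    "/surgeons/",
]
indexed = [
    "/procedures/facial-surgery/rhinoplasty/",
    "/procedures/facial-surgery/facelift/",
    "/surgeons/",
    "/surgeons/?sort=name",
]
report = coverage_report(submitted, indexed)
print(f"Coverage: {report['coverage']:.0%}")
print("Not indexed:", report["not_indexed"])
print("Indexed but not in sitemap:", report["indexed_but_not_submitted"])
```

The two lists point in different directions: important pages missing from the index suggest discovery or quality problems, while indexed parameter URLs that were never submitted suggest crawl budget leaking into duplicates.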

Connecting Technical SEO to Patient Acquisition and Revenue

Optimized architecture leads to faster indexing of new or updated medical content. Faster indexation means the clinic appears sooner in search results for high-value queries. Increased visibility drives qualified organic traffic, increasing patient inquiries and bookings. This increases patient acquisition and revenue for private healthcare providers. Efficient site architecture, and the crawl budget it conserves, is foundational to this success.

Future-Proof Your Clinic: Partner with a Semantic SEO Expert

Site architecture is a strategic asset for SEO success in the London private healthcare market. Ensuring Google can efficiently crawl, understand, and rank medical content is paramount for attracting high-value patients. To leverage semantic engineering principles and future-proof your clinic’s online presence, partner with a specialist. Abdurrahman Şimşek offers semantic SEO and web development for medical clinics to build a high-authority online presence. Visit abdurrahmansimsek.com to learn more.

Frequently Asked Questions

What is the ideal site architecture crawl budget for a surgeon’s website?

Efficient use of crawl budget on a surgeon’s website comes from a logical, hierarchical structure, often called a ‘silo’ or ‘topic cluster’ model. Kept relatively flat, this structure ensures that important procedure pages are typically no more than 3 clicks from the homepage, allowing Googlebot to easily discover and index your most valuable medical content. Such an approach optimizes the allocation of Google’s crawling resources.

How does poor internal linking negatively affect a surgeon’s crawl budget?

Poor internal linking creates orphan pages, which have no internal links pointing to them, and deep pages, which are many clicks from the homepage. This forces Googlebot to expend more effort navigating your site, wasting its allocated crawl budget on discovery rather than indexing your key services and authoritative medical information. A robust internal linking strategy is vital for efficient crawling.

Can the URL structure directly impact the site architecture crawl budget?

Yes, a clean, logical URL structure, such as `/procedures/tummy-tuck/`, provides clear signals to search engines about a page’s content and its place within the site hierarchy. Conversely, long, dynamic URLs with numerous parameters can confuse crawlers, leading to inefficient use of crawl budget. Clear URLs contribute to better crawl efficiency.

What is ‘page depth’ and why is it critical for a medical website’s crawl efficiency?

Page depth refers to the number of clicks required to reach a specific page from the homepage. For medical sites, high-value procedure pages buried deep within the site (e.g., 5-6 clicks in) may be crawled less frequently by Google. Maintaining a shallow page depth for critical content is therefore crucial for ensuring timely discovery and visibility in search results.

Do breadcrumbs enhance site architecture crawl budget optimization?

Absolutely. Breadcrumbs provide clear, contextual internal links on every page, reinforcing the site’s hierarchical structure for both users and search engines. This helps Googlebot understand the relationships between pages and crawl the site more efficiently, directly contributing to better crawl budget management.

How can Abdurrahman Şimşek help optimize my clinic’s website for improved crawl budget?

Abdurrahman Şimşek specializes in building high-authority Semantic Content Networks for medical clinics, leveraging 10+ years of experience in holistic SEO strategy. By optimizing your site’s structure and technical elements, he ensures Googlebot efficiently discovers and ranks your most valuable medical content, attracting high-value patients in the competitive London private healthcare market. You can learn more or get started by visiting abdurrahmansimsek.com.
