How to Use Log File Analysis: Optimize Googlebot Crawl Efficiency

To effectively manage search presence, use log file analysis to understand Googlebot’s interaction with your website. This technical SEO practice involves examining server logs to identify wasted crawl budget, where Googlebot spends resources on low-value or error pages. By analyzing user-agent data and HTTP status codes, strategists can optimize crawl paths, ensuring valuable content is efficiently discovered and indexed. This process is crucial for improving indexation, conserving server resources, and enhancing overall search visibility.

As a Semantic SEO Strategist, Abdurrahman Şimşek specializes in leveraging deep technical insights from crawl data to enhance website performance. This approach focuses on aligning Googlebot’s activity with your strategic content priorities, particularly for complex sites.


To manage your website’s search presence, use log file analysis to understand how search engine bots interact with your content. This guide explains how to examine server logs to identify and eliminate wasted Googlebot crawl. Optimizing site efficiency, particularly for complex medical websites, improves indexation, conserves server resources, and enhances search visibility.

What is Log File Analysis for SEO and Why Does it Matter?

Log file analysis for SEO involves examining server access logs to understand how search engine bots, like Googlebot, interact with your website. It provides direct, unfiltered data on which URLs are visited, how often, and any errors encountered. The goal is to identify and eliminate “wasted crawl budget,” ensuring Googlebot efficiently discovers and indexes your most valuable content.

This process reveals discrepancies between your intended site structure and Googlebot’s actual crawl path. It is a technical SEO tool for diagnosing issues that hinder indexation or dilute page authority. Understanding crawl patterns is essential for maintaining search performance, especially for large or frequently updated websites.

Decoding Server Logs: The Googlebot’s Perspective

Server logs are plain text files that record every request made to your web server. Each entry includes the requester’s IP address, the date and time, the requested URL, the HTTP status code, and the user-agent. When Googlebot visits your site, its activity is logged, providing a record of its journey.
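As a minimal sketch of what one of these entries looks like once it is split into fields, the snippet below parses a single line. It assumes the server writes the common "combined" log format used by default in many Apache and Nginx setups; the sample line and the `parse_line` helper are invented for illustration, and the pattern would need adjusting for a custom log format.

```python
import re
from datetime import datetime

# Combined Log Format (assumption: your server uses this layout).
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
)

def parse_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    # A "-" in the size field means no response body was sent.
    entry["bytes"] = 0 if entry["bytes"] == "-" else int(entry["bytes"])
    entry["time"] = datetime.strptime(entry["time"], "%d/%b/%Y:%H:%M:%S %z")
    return entry

# Hypothetical sample line, invented for illustration.
sample = (
    '66.249.66.1 - - [10/Mar/2025:06:25:14 +0000] '
    '"GET /procedures/rhinoplasty HTTP/1.1" 200 15230 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
)

entry = parse_line(sample)
print(entry["ip"], entry["url"], entry["status"], entry["user_agent"])
```

Returning `None` for lines that do not match lets a larger script skip malformed entries instead of stopping on them.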

Analyzing these logs provides insight into how Googlebot perceives your site’s structure and content. This includes identifying pages it frequently revisits, those it ignores, and any technical barriers it encounters. This data informs decisions about crawl optimization and site health. For a deeper dive, read A Guide to Log File Analysis for Private Clinic Websites.

How to Use Log File Analysis to Identify Wasted Crawl Budget

To use log file analysis, access your server logs via your hosting provider’s control panel or FTP. Process these raw files to extract SEO insights. The objective is to find where Googlebot wastes crawl budget on low-value or inaccessible pages.

Indicators of wasted crawl include Googlebot repeatedly hitting 4xx or 5xx error pages, requesting low-value URLs (such as faceted or parameterized pages) that are linked internally but not disallowed in robots.txt, or spending time on paginated archives with little unique content. Identifying these patterns lets you intervene and redirect Googlebot’s attention to your important content.

Key Metrics and HTTP Status Codes to Monitor

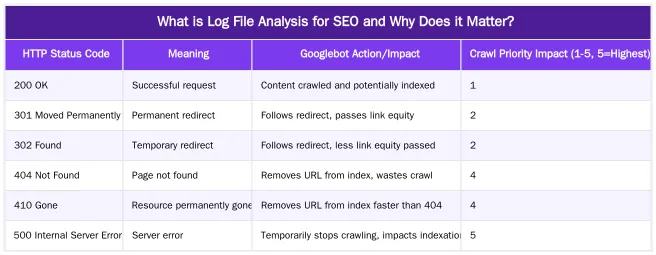

When analyzing server logs, key metrics provide insight into Googlebot’s behavior, including crawl frequency for specific URLs, bytes downloaded per page, and response times. HTTP status codes are crucial for identifying crawl issues.

Monitoring 200 (OK) responses indicates successful crawls, while 3xx (Redirection) codes show Googlebot following redirects. 4xx (Client Error) and 5xx (Server Error) codes signal problems that waste crawl budget and can prevent indexation. Pages returning 404 (Not Found) or 500 (Internal Server Error) tell Googlebot that content is unavailable, leading to wasted effort.
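One way to turn these status codes into something actionable is to count Googlebot requests per status class and list the URLs that most often return errors. The sketch below assumes the log lines have already been parsed into dictionaries with `url`, `status`, and `user_agent` keys; the function names and sample entries are hypothetical.

```python
from collections import Counter

def status_class_summary(entries):
    """Count Googlebot requests per status class (2xx, 3xx, 4xx, 5xx)."""
    counts = Counter()
    for entry in entries:
        if "Googlebot" not in entry["user_agent"]:
            continue  # ignore human visitors and other bots
        counts[f"{entry['status'] // 100}xx"] += 1
    return counts

def top_error_urls(entries, limit=10):
    """List the URLs where Googlebot most often received a 4xx or 5xx response."""
    errors = Counter(
        e["url"] for e in entries
        if "Googlebot" in e["user_agent"] and e["status"] >= 400
    )
    return errors.most_common(limit)

# Hypothetical parsed entries, invented for illustration.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
entries = [
    {"url": "/procedures/rhinoplasty", "status": 200, "user_agent": GOOGLEBOT},
    {"url": "/old-page", "status": 404, "user_agent": GOOGLEBOT},
    {"url": "/old-page", "status": 404, "user_agent": GOOGLEBOT},
    {"url": "/blog", "status": 301, "user_agent": GOOGLEBOT},
    {"url": "/search", "status": 500, "user_agent": GOOGLEBOT},
    {"url": "/old-page", "status": 404, "user_agent": "Mozilla/5.0 (Windows NT 10.0)"},
]

print(dict(status_class_summary(entries)))
print(top_error_urls(entries))
```

A high share of 4xx or 5xx responses, or a single URL dominating the error list, is usually the first place to look for wasted crawl.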

Tools for Effective Log File Analysis: Screaming Frog & Beyond

Manually analyzing raw log files is impractical. Specialized tools streamline the process. The Screaming Frog Log File Analyser lets you upload log files to visualize Googlebot’s activity, including crawl frequency, HTTP status codes, and user-agent data. This tool helps identify wasted crawl patterns and prioritize fixes.

Server-side analytics platforms or custom scripts (often in Python) can also parse and visualize log data, offering flexibility for larger datasets. Identifying the user-agent (e.g., Googlebot, Googlebot-Image) helps differentiate search engine activity from other bot or human traffic, focusing your optimization efforts.
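Because a user-agent string can be set by any client, filtering on it alone may include impostor bots. Google's published guidance describes confirming a crawler with a reverse DNS lookup followed by a forward lookup. The sketch below follows that idea under stated assumptions: the hostname suffixes are limited to `googlebot.com` and `google.com`, the default lookups handle IPv4 only, and the demo uses made-up DNS tables so that it runs offline.

```python
import socket

# Assumption: hostnames of genuine Googlebot crawlers end in one of these suffixes.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")

def _reverse_dns(ip):
    """Resolve an IP address to its primary hostname."""
    return socket.gethostbyaddr(ip)[0]

def _forward_dns(hostname):
    """Resolve a hostname to its list of IPv4 addresses."""
    return socket.gethostbyname_ex(hostname)[2]

def is_verified_googlebot(ip, reverse_lookup=_reverse_dns, forward_lookup=_forward_dns):
    """Check that the IP reverse-resolves to a Google host that resolves back to it."""
    try:
        hostname = reverse_lookup(ip)
        if not hostname.endswith(GOOGLE_SUFFIXES):
            return False
        return ip in forward_lookup(hostname)
    except OSError:
        # DNS failures (socket.herror, socket.gaierror) are treated as unverified.
        return False

# Offline demo with hypothetical DNS data, invented for illustration.
fake_ptr = {
    "66.249.66.1": "crawl-66-249-66-1.googlebot.com",
    "203.0.113.5": "crawl-66-249-66-1.googlebot.com",  # spoofed reverse record
    "198.51.100.7": "host.example.net",
}
fake_a = {"crawl-66-249-66-1.googlebot.com": ["66.249.66.1"]}

def fake_reverse(ip):
    return fake_ptr.get(ip, "")

def fake_forward(hostname):
    return fake_a.get(hostname, [])

for address in fake_ptr:
    print(address, is_verified_googlebot(address, fake_reverse, fake_forward))
```

Passing the lookup functions as parameters keeps the check testable without network access. In production the defaults would query real DNS, so caching results per IP is advisable for large logs.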

Optimizing Crawl Efficiency for Complex Medical Websites

Complex medical websites, with extensive content, numerous procedure pages, and strict E-E-A-T requirements, make crawl optimization challenging. Much of their content is Your Money or Your Life (YMYL) information, which is held to a high standard of experience, expertise, authoritativeness, and trustworthiness. Log file analysis is critical here because it shows whether Googlebot is prioritizing your medically accurate, high-value content over less important pages.

Inefficient crawling can cause critical medical information to be overlooked or slow to update in the index, impacting patient acquisition and online authority. Crawl optimization directs Googlebot’s resources to pages that build E-E-A-T and serve patient needs.

The Impact of Site Architecture on Googlebot’s Journey

Site architecture guides Googlebot through a medical website. Clear internal linking, logical content silos for medical specialties, and a shallow hierarchy make procedure pages, surgeon bios, and educational content discoverable. An overly deep or tangled structure can bury important pages, causing inefficient crawling and wasted budget.

Log analysis confirms if Googlebot follows your intended architecture. It reveals if critical pages are under-crawled due to poor internal linking or if Googlebot is lost in less important sections. Optimizing internal linking, especially for medical content hubs, improves crawl paths and ensures E-E-A-T signals are transmitted throughout the site.
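A simple way to compare Googlebot's actual path with your intended architecture is to group crawled URLs by top-level section and look at each section's share of requests. The sketch below assumes the first path segment identifies a section (for example `/procedures`), which may not hold for every site; the function names and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit

def section_of(url):
    """Return the first path segment of a URL, or "/" for the homepage."""
    path = urlsplit(url).path
    parts = [part for part in path.split("/") if part]
    return "/" + parts[0] if parts else "/"

def crawl_share_by_section(urls):
    """Map each top-level section to its share of all crawled requests."""
    counts = Counter(section_of(url) for url in urls)
    total = sum(counts.values())
    return {section: hits / total for section, hits in counts.items()}

# Hypothetical URLs requested by Googlebot, invented for illustration.
crawled = [
    "/procedures/rhinoplasty",
    "/procedures/facelift",
    "/procedures/rhinoplasty/recovery",
    "/tag/news?page=2",
    "/",
]

for section, share in sorted(crawl_share_by_section(crawled).items()):
    print(f"{section}: {share:.0%}")
```

If a section you consider strategic receives only a small share of requests while tag or archive sections dominate, that points to an internal linking or architecture problem.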

Reducing Cost of Retrieval: A Strategic Imperative for Medical SEO

Reducing Google’s “Cost of Retrieval” (CoR) is critical for large medical websites. CoR refers to the resources Google expends to crawl, render, and index a website. Each time Googlebot encounters an error, a low-value page, or a slow resource, your site’s CoR increases. Log file analysis helps lower CoR by identifying these inefficiencies so they can be eliminated.

Ensuring Googlebot spends its crawl budget on high-E-E-A-T content instead of 404s, redirects, or thin pages signals efficiency and authority to Google. This can lead to more frequent crawling of important updates and improved indexation. Optimizing CoR is a core component of technical SEO for medical practices. Learn more about this concept at Reducing Cost of Retrieval: Technical SEO for Efficient Medical Website Performance.

Advanced Insights from a Semantic SEO Strategist

A semantic SEO approach to log file analysis extends beyond basic crawl statistics, integrating with an entity-driven framework. It focuses on how Googlebot perceives and prioritizes semantic relationships within content. This perspective is crucial for medical websites. Understanding Googlebot’s crawl patterns this way allows for optimizations that align with how search engines process information in 2026.

This analysis identifies if Googlebot is discovering and associating key medical entities (e.g., specific procedures, conditions, surgeon profiles) with their relevant attributes and topical clusters. This ensures E-E-A-T signals are communicated to search engines.

Beyond Basic Crawl Stats: Entity-Driven Log Analysis

Entity-driven log analysis uses crawl data to understand Googlebot’s focus on specific entities and topical clusters. It analyzes if Googlebot prioritizes pages related to core medical entities and their attributes. For instance, is Googlebot frequently crawling pages about “Rhinoplasty” and its related sub-topics like “recovery time” or “types of anesthesia”?

This approach guides content and technical optimizations, ensuring pages central to your semantic network receive adequate crawl attention. If log data shows under-crawling of critical entity pages, it indicates a need to strengthen internal linking or improve site architecture around those topics. This aligns with Google’s shift towards understanding entities over keywords. For more on Googlebot’s crawling behavior, refer to Google Search Central’s documentation on crawling and indexing.

Leveraging Log Data for Proactive Technical SEO & Algorithm Preparedness

Continuous log analysis is an early warning system for technical issues. Spikes in 4xx or 5xx errors, drops in crawl activity for important sections, or changes in user-agent behavior can signal problems before they impact rankings. This proactive monitoring allows for intervention, preventing minor issues from escalating.
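The early-warning idea can be sketched as a daily error-rate check that flags days well above the average of the days before them. The thresholds here (`factor=2.0`, `min_rate=0.05`) are arbitrary assumptions chosen for illustration, and the input is assumed to be `(date, status)` pairs for Googlebot requests with dates as ISO strings.

```python
from collections import defaultdict

def daily_error_rates(requests):
    """Map each date to the share of requests that returned 4xx or 5xx."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for day, status in requests:
        totals[day] += 1
        if status >= 400:
            errors[day] += 1
    return {day: errors[day] / totals[day] for day in totals}

def flag_spikes(rates, factor=2.0, min_rate=0.05):
    """Flag days whose error rate exceeds `factor` times the mean of earlier days."""
    flagged = []
    days = sorted(rates)  # ISO date strings sort chronologically
    for i, day in enumerate(days):
        if i == 0:
            continue  # no earlier days to compare against
        baseline = sum(rates[d] for d in days[:i]) / i
        if rates[day] >= min_rate and rates[day] > factor * baseline:
            flagged.append(day)
    return flagged

# Hypothetical Googlebot requests, invented for illustration.
requests = (
    [("2025-03-01", 200)] * 9 + [("2025-03-01", 404)] * 1
    + [("2025-03-02", 200)] * 9 + [("2025-03-02", 404)] * 1
    + [("2025-03-03", 200)] * 5 + [("2025-03-03", 500)] * 5
)

rates = daily_error_rates(requests)
print(rates)
print(flag_spikes(rates))
```

The `min_rate` floor avoids flagging days where the error rate doubles but remains negligible. Both values would need tuning against a site's normal traffic.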

Observing shifts in Googlebot’s crawling patterns over time can provide insight into potential algorithmic shifts. This allows for adjustments to site structure, content emphasis, or technical configurations to prepare for future algorithm updates. This foresight helps maintain long-term search visibility and authority.

What Results Can You Expect from Crawl Optimization?

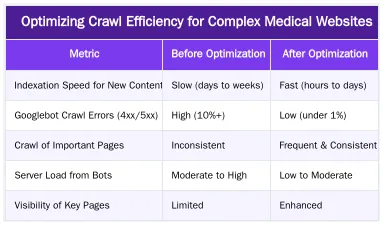

Crawl optimization, driven by log file analysis, yields SEO benefits. The outcome is a more efficient allocation of Googlebot’s crawl budget, meaning search engines spend more time on important content and less on errors or low-value pages. This improves indexation and ranking potential.

For medical websites that frequently add content like new research, procedures, or surgeon profiles, faster indexation is crucial. It ensures up-to-date, authoritative information reaches patients quickly. Optimized crawling also reduces server load, improving site performance and stability, especially for high-traffic platforms.

Improved Indexation, Ranking Potential, and Server Resource Management

When Googlebot crawls your site more efficiently, new and updated content is discovered and indexed faster. This means your latest medical articles, procedure updates, or patient testimonials can appear in search results sooner, capturing patient queries. Eliminating wasted crawl on error pages or duplicate content focuses Googlebot’s attention on pages that build topical authority and E-E-A-T, impacting their ranking potential.

Reducing unnecessary crawls on less important pages lowers server demand. This improves site speed and responsiveness, which are factors for user experience and Core Web Vitals. For a guide on addressing these issues, refer to how to fix crawl budget issues. The table below illustrates the impact of crawl optimization:

Ready to Optimize Your Clinic’s Crawl Budget?

Crawl budget management is a strategic investment in your medical clinic’s online visibility and authority. Understanding Googlebot’s behavior through log file analysis leads to SEO improvements, from faster indexation to enhanced ranking potential. If your medical website struggles with crawl efficiency or you want to implement a semantic SEO strategy, expert guidance can help.

Book a Semantic SEO Audit or connect via our Direct WhatsApp Strategy Line: +90 506 206 86 86 to discuss how technical and semantic SEO can improve your clinic’s digital performance. Visit our contact page for more information.

Conclusion

Log file analysis is a critical skill for SEO professionals, particularly for complex medical websites. It provides a view into Googlebot’s interactions, revealing insights into crawl patterns, errors, and wasted budget. This data allows you to implement technical optimizations that reduce Google’s Cost of Retrieval, improve indexation, and strengthen search performance. For medical clinics, integrating log analysis into a semantic SEO strategy is necessary for sustainable organic growth. Book a Semantic SEO Audit or reach out via Direct WhatsApp Strategy Line: +90 506 206 86 86.

Frequently Asked Questions

What is the primary goal when you use log file analysis for SEO?

The primary goal when you use log file analysis is to gain an unfiltered view of how search engine crawlers interact with your site. This direct data reveals which URLs Googlebot visits, how frequently, and any errors it encounters, enabling you to identify and eliminate wasted crawl activity. It helps you see your website exactly as a search engine crawler sees it.

What’s the most valuable insight you can gain from log files for a clinic?

For a clinic, the most valuable insight is discovering if Googlebot is spending significant crawl budget on non-essential pages like old PDFs, thank-you pages, or URLs with tracking parameters. Redirecting this wasted effort towards your core procedure and doctor profile pages is crucial for improving their indexation and visibility. This optimization ensures your most important content is prioritized.
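To estimate how much crawl activity goes to such non-essential URLs, one option is to classify each crawled URL and compute the share that falls into a low-value bucket. The sketch below is illustrative only: the tracking parameter list and the `thank-you` path check are assumptions that should be adapted to your own URL patterns, and the sample URLs are invented.

```python
from urllib.parse import urlsplit, parse_qsl

# Assumption: these query parameters are used only for campaign tracking.
TRACKING_PARAMS = {
    "utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content",
    "gclid", "fbclid",
}

def classify_url(url):
    """Label a URL as a tracking-parameter URL, a PDF, a thank-you page, or other."""
    parts = urlsplit(url)
    params = {key for key, _ in parse_qsl(parts.query, keep_blank_values=True)}
    path = parts.path.lower()
    if params & TRACKING_PARAMS:
        return "tracking-parameter"
    if path.endswith(".pdf"):
        return "pdf"
    if "thank-you" in path:
        return "thank-you"
    return "other"

def wasted_share(urls):
    """Return the share of crawled URLs that fall into a low-value bucket."""
    if not urls:
        return 0.0
    wasted = sum(1 for url in urls if classify_url(url) != "other")
    return wasted / len(urls)

# Hypothetical URLs requested by Googlebot, invented for illustration.
crawled = [
    "/procedures/rhinoplasty",
    "/blog/post?utm_source=newsletter",
    "/files/brochure-2019.PDF",
    "/contact/thank-you",
    "/doctors/dr-example",
]

for url in crawled:
    print(classify_url(url), url)
print(f"Low-value share: {wasted_share(crawled):.0%}")
```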

How do I access my website’s log files?

Log files are typically accessible through your web hosting control panel, such as cPanel or Plesk, or by requesting them directly from your hosting provider. You will usually need FTP or SSH access to download these files for subsequent analysis. Once downloaded, specialized tools can help parse and visualize the data.

Is log file analysis a one-time task?

No, log file analysis should be a recurring process, especially for dynamic or large websites. Performing this analysis at least quarterly helps you monitor Googlebot’s evolving behavior, proactively address new crawl issues, and verify the effectiveness of your ongoing SEO optimizations. It’s a continuous effort to maintain optimal crawl efficiency.

Can I use log file analysis to find pages that are never crawled?

Yes, a key benefit is the ability to identify important pages that Googlebot isn’t discovering or crawling, which are often “orphan pages” with no internal links pointing to them. By cross-referencing your sitemap’s important URLs with the URLs Googlebot actually requested in your logs, you can pinpoint content that needs better internal linking or sitemap submission. This ensures all valuable content has a chance to be indexed.
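The cross-referencing step can be sketched as a set difference between the paths listed in an XML sitemap and the paths Googlebot requested. The example assumes a standard sitemap using the sitemaps.org namespace and compares URL paths only; the sitemap content, domain, and function names are hypothetical.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_paths(sitemap_xml):
    """Extract the set of URL paths listed in a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return {
        urlsplit(loc.text.strip()).path or "/"
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
    }

def never_crawled(sitemap_xml, crawled_paths):
    """Return sitemap paths that do not appear among the crawled paths."""
    return sorted(sitemap_paths(sitemap_xml) - set(crawled_paths))

# Hypothetical sitemap and crawl data, invented for illustration.
sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://clinic.example/procedures/rhinoplasty</loc></url>"
    "<url><loc>https://clinic.example/procedures/facelift</loc></url>"
    "<url><loc>https://clinic.example/doctors/dr-example</loc></url>"
    "</urlset>"
)
crawled_by_googlebot = ["/procedures/rhinoplasty", "/doctors/dr-example", "/tag/news"]

print(never_crawled(sitemap, crawled_by_googlebot))
```

Pages that appear in this list over a long enough log window are candidates for stronger internal linking.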

How does log file analysis help optimize crawl efficiency?

When you use log file analysis, you gain direct insights into Googlebot’s crawl patterns, allowing you to pinpoint inefficient crawling. This data helps you identify pages that are unnecessarily consuming crawl budget, such as low-value content or broken links, so you can implement targeted optimizations like redirects, noindex tags, or improved internal linking. Ultimately, it ensures Googlebot focuses on your most important content.

How can I get expert help with log file analysis and crawl optimization for my clinic?

To ensure your clinic’s website is crawled efficiently and effectively, consider booking a Semantic SEO Audit with Abdurrahman Şimşek. With over 10 years of experience, he specializes in optimizing crawl budget and improving search visibility for medical and plastic surgery clinics. You can also reach out directly via the WhatsApp Strategy Line at +90 506 206 86 86 for immediate assistance.
