Optimizing Crawl Budget: Maximizing Indexation for Large Sites

Crawl budget optimization is crucial for large SaaS and e-commerce websites that want faster indexation and stronger organic visibility. This guide provides a data-driven, proactive technical SEO workflow for optimizing crawl budget so that Googlebot efficiently discovers and indexes critical content. Readers will learn how robots.txt, XML sitemaps, internal linking, and server logs affect crawl efficiency, and how to use them to prevent indexation issues. Done well, crawl budget optimization stops Googlebot from wasting requests on low-value URLs and focuses it on high-priority pages.

AbdurrahmanSimsek.com provides expert technical SEO insights, specializing in strategies for large-scale websites. Our content prioritizes accuracy, data-driven approaches, and actionable workflows to deliver tangible results. We are committed to ethical practices and maximizing client success in complex digital environments.

Optimizing crawl budget is a paramount technical SEO discipline for large SaaS and e-commerce websites in 2026, directly impacting indexation speed and organic visibility. When Googlebot wastes time on low-value URLs, your most important pages—product releases, feature updates, and core landing pages—can be overlooked. This comprehensive guide provides a data-driven, proactive workflow to ensure search engines efficiently discover and index your critical content, maximizing your site’s organic potential and preventing costly indexation issues.

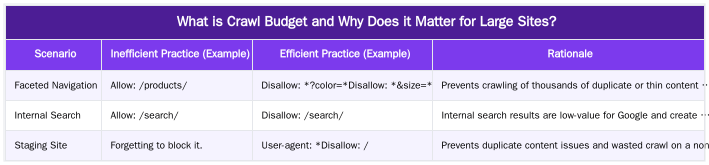

What is Crawl Budget and Why Does it Matter for Large Sites?

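Crawl budget is the number of URLs Googlebot can and wants to crawl on your website within a given timeframe. It is not a single metric but the combination of two concepts: the crawl rate limit (which Google's documentation now calls the crawl capacity limit), which keeps Googlebot from overwhelming your server, and crawl demand, which reflects how important and fresh Google perceives your content to be. For small websites, crawl budget is rarely a concern; Google's own guidance is aimed at sites with roughly a million or more unique pages that change weekly, or 10,000+ unique pages that change daily.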

However, for large SaaS platforms with thousands of documentation pages or e-commerce sites with millions of product SKUs and filtered variations, it is a critical resource. An unmanaged site structure can generate a near-infinite number of low-value URLs (e.g., from faceted navigation or internal search results). When Googlebot spends its limited resources crawling these dead ends, it fails to discover and index new product pages, updated blog posts, or critical feature announcements. This leads to significant delays in indexation, wasted server resources, and ultimately, lost organic traffic and revenue. Efficiently managing and optimizing crawl budget is non-negotiable for enterprise-level success.

The Core Factors Influencing Your Crawl Budget

To effectively manage your crawl budget, you must understand the signals that influence Google’s crawling behavior. As an expert in SaaS technical SEO, I’ve seen firsthand how these factors dictate whether a site’s key pages are discovered quickly or left languishing. The two primary components, as outlined by sources like Google Search Central, are crawl rate and crawl demand.

Crawl Rate Limit

This is the technical ceiling on how fast Googlebot can crawl your site without degrading user experience. A healthy, fast server signals to Google that it can crawl more aggressively.

- Server Health & Response Time: If your server responds slowly or frequently returns server errors (5xx) or 429 Too Many Requests responses, Googlebot will slow its crawl rate to avoid overloading it. A low Time to First Byte (TTFB) is a crucial indicator of a healthy server.

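- Core Web Vitals (CWV): CWV are part of Google's page experience ranking signals and measure how pages load and respond in users' browsers, not how fast Googlebot can fetch your HTML, so they do not set your crawl rate directly. Strong CWV scores often correlate with robust infrastructure, however, and a fast backend that serves users quickly usually serves Googlebot quickly too, allowing more URLs to be fetched per crawl session.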
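A minimal way to watch both signals from this list is to summarize Googlebot's requests by status code and response time. The sketch below assumes you have already extracted `(status_code, response_ms)` pairs from your logs (for example from Nginx's `$request_time` or Apache's `%D` field, converted to milliseconds); the function names and the 1% / 500 ms thresholds are illustrative assumptions, not Google-published limits. It counts 429 alongside 5xx because Google's documentation lists both as responses that cause Googlebot to slow down.

```python
from statistics import median


def is_throttle_signal(status):
    """Status codes that make Googlebot back off: any 5xx, plus 429."""
    return 500 <= status <= 599 or status == 429


def server_health(samples, max_error_rate=0.01, max_median_ms=500):
    """Summarize (status_code, response_ms) pairs taken from Googlebot requests.

    The default thresholds are illustrative assumptions, not official limits.
    """
    samples = list(samples)
    if not samples:
        raise ValueError("need at least one sample")
    errors = sum(1 for status, _ in samples if is_throttle_signal(status))
    error_rate = errors / len(samples)
    median_ms = median(ms for _, ms in samples)
    return {
        "error_rate": error_rate,
        "median_ms": median_ms,
        "healthy": error_rate <= max_error_rate and median_ms <= max_median_ms,
    }


# Hypothetical sample: one 503 out of four requests.
print(server_health([(200, 120), (200, 180), (503, 900), (200, 150)]))
```

In practice you would run this per day or per site section, so a sudden rise in errors or latency on one template stands out.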

Crawl Demand

This is how much Google *wants* to crawl your site. Higher demand means Google will allocate more resources to keep its index of your site fresh.

- Popularity: URLs that have more high-quality backlinks and strong internal links are perceived as more important and are crawled more frequently.

- Freshness: Content that is updated regularly signals to Google that it should be re-crawled to capture the latest changes. This is vital for news sections, blogs, and frequently updated product pages.

- Staleness: Conversely, large sections of a site with unchanging content may see a decrease in crawl demand over time.

The core challenge in optimizing crawl budget is to align these factors, ensuring your server is healthy enough to handle a high crawl rate and that you are clearly signaling which pages have the highest crawl demand.

A Proactive Workflow for Optimizing Crawl Budget

Reactive fixes are not enough. For large websites in 2026, a proactive and systematic approach to optimizing crawl budget is essential. This workflow focuses on guiding Googlebot to your most valuable content while actively preventing it from wasting resources on unimportant pages.

Step 1: Master Your `robots.txt` File

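Your `robots.txt` is the first line of defense. Use it to block crawling of sections that provide no SEO value, such as faceted navigation parameters, internal search result pages, and thank-you pages. A clean `robots.txt` immediately improves crawl efficiency. Keep in mind that `robots.txt` controls crawling, not indexing: a disallowed URL can still be indexed (without its content) if other pages link to it, and Googlebot cannot see a `noindex` tag on a page it is not allowed to fetch. Staging environments should be protected with authentication rather than `robots.txt`, since the file is public and only advisory.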
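Before deploying new `robots.txt` rules, it helps to test them against real URLs from your site. Python's built-in `urllib.robotparser` uses plain prefix matching with first-match precedence, so it does not evaluate Google-style `*` and `$` wildcards. The sketch below instead hand-rolls the precedence Google documents (the longest matching rule wins, and Allow wins a tie). The rules and paths are hypothetical, and user-agent matching is simplified to exact, case-insensitive tokens.

```python
import re

# Hypothetical rules for illustration; replace with your own paths and parameters.
ROBOTS_TXT = """
User-agent: *
Disallow: /search
Disallow: /*?*color=
Disallow: /*?*sort=
Allow: /search/help$
"""


def parse_groups(text):
    """Split robots.txt into (user_agents, rules) groups; rules are (is_allow, pattern)."""
    groups, agents, rules = [], [], []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key == "user-agent":
            if rules:  # a user-agent line after rules starts a new group
                groups.append((agents, rules))
                agents, rules = [], []
            agents.append(value.lower())
        elif key in ("allow", "disallow") and agents:
            rules.append((key == "allow", value))
    if agents:
        groups.append((agents, rules))
    return groups


def rules_for(groups, agent):
    """Use the groups naming this crawler; fall back to '*' groups if none do."""
    agent = agent.lower()
    matched = [group_rules for agents, group_rules in groups if agent in agents]
    if not matched:
        matched = [group_rules for agents, group_rules in groups if "*" in agents]
    return [rule for group_rules in matched for rule in group_rules]


def to_regex(pattern):
    """'*' matches any character sequence; a trailing '$' anchors the end of the URL."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = ".*".join(re.escape(piece) for piece in body.split("*"))
    return re.compile(regex + ("$" if anchored else ""))


def is_allowed(rules, path):
    """Longest matching pattern wins; on a tie, Allow wins. No match means allowed."""
    best_len, allowed = -1, True
    for is_allow, pattern in rules:
        if not pattern:  # an empty Disallow blocks nothing
            continue
        if to_regex(pattern).match(path):
            if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
                best_len, allowed = len(pattern), is_allow
    return allowed


rules = rules_for(parse_groups(ROBOTS_TXT), "Googlebot")
for path in ["/search?q=boots", "/search/help", "/boots?color=red", "/boots"]:
    print(path, "allowed" if is_allowed(rules, path) else "blocked")
```

Running a list of your highest-value URLs through a check like this before each deploy catches rules that accidentally block important pages.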

Step 2: Curate Clean and Dynamic XML Sitemaps

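Your XML sitemap should be a clean list of your most important, indexable URLs. It should never contain non-canonical URLs, redirected pages, or pages that return a non-200 status code. Each sitemap file is limited to 50,000 URLs and 50 MB uncompressed, so large sites must split their URLs across multiple files (e.g., by category or content type) and list them in a sitemap index file. Splitting by section also lets you filter Search Console's indexing reports per sitemap, which makes it much easier to see which part of the site has an indexation problem. Keep `<lastmod>` values accurate, since Google only relies on them when they are consistently correct.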
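As a sketch of the split-and-index approach, the code below filters a hypothetical page export (dicts with `url`, `status`, `canonical`, and optional `lastmod` keys, an assumed shape) down to 200-status, self-canonical URLs, chunks them into files of at most 50,000 URLs, and builds a sitemap index pointing at them. File names and the base URL are placeholders, and the sketch does not check the 50 MB size limit.

```python
from xml.etree import ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # protocol limit per file (50 MB uncompressed also applies)


def eligible(page):
    """Keep only indexable URLs: a 200 status and a self-referencing canonical."""
    return page["status"] == 200 and page["canonical"] == page["url"]


def build_urlset(pages):
    """Serialize one <urlset> file; ElementTree escapes characters such as '&'."""
    urlset = ET.Element("urlset", xmlns=NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["url"]
        if page.get("lastmod"):
            ET.SubElement(url, "lastmod").text = page["lastmod"]
    return ET.tostring(urlset, encoding="unicode")


def build_sitemaps(pages, base_url, max_urls=MAX_URLS_PER_SITEMAP):
    """Return ({filename: xml}, index_xml) for the eligible pages."""
    clean = [page for page in pages if eligible(page)]
    files = {}
    index = ET.Element("sitemapindex", xmlns=NS)
    for start in range(0, len(clean), max_urls):
        name = f"sitemap-{start // max_urls + 1}.xml"  # hypothetical naming scheme
        files[name] = build_urlset(clean[start:start + max_urls])
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{name}"
    return files, ET.tostring(index, encoding="unicode")
```

A per-category split works the same way: group the clean pages by section first, then call the chunking step once per group.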

Step 3: Reinforce Your Internal Linking Architecture

Internal links are the primary way Google discovers new pages and understands site structure. A strong content silo architecture funnels authority and crawl priority to your most important pages. Regularly audit your site for broken links (404s) and redirect chains (e.g., A -> B -> C), as both waste crawl budget: every extra hop is a separate request Googlebot must make before it reaches the content. Pages with more internal links tend to be treated as more important and are typically crawled more often. This is a key part of any strategy for optimizing crawl budget.
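Redirect chains are easy to detect once you have a map of redirect sources to targets, for instance exported from a site crawler. The sketch below (function names and the sample map are hypothetical) follows each redirect to its final destination, reports the hop count, and flags loops, so internal links and redirect rules can be repointed straight at the final URL.

```python
def follow_redirects(start, redirects):
    """Follow source -> target hops from `start`; raise ValueError on a loop."""
    chain, seen = [start], {start}
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in seen:
            raise ValueError("redirect loop: " + " -> ".join(chain + [target]))
        chain.append(target)
        seen.add(target)
    return chain


def audit_redirects(redirects):
    """Report the final destination and hop count for every redirecting URL."""
    report = {}
    for source in redirects:
        try:
            chain = follow_redirects(source, redirects)
        except ValueError as err:
            report[source] = {"final": None, "hops": None, "issue": str(err)}
            continue
        hops = len(chain) - 1
        report[source] = {"final": chain[-1], "hops": hops,
                          "issue": "chain" if hops > 1 else None}
    return report


# Hypothetical redirect map exported from a crawler.
redirects = {"/a": "/b", "/b": "/c", "/old": "/new", "/x": "/y", "/y": "/x"}
for source, row in audit_redirects(redirects).items():
    if row["issue"]:
        print(source, row)
```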

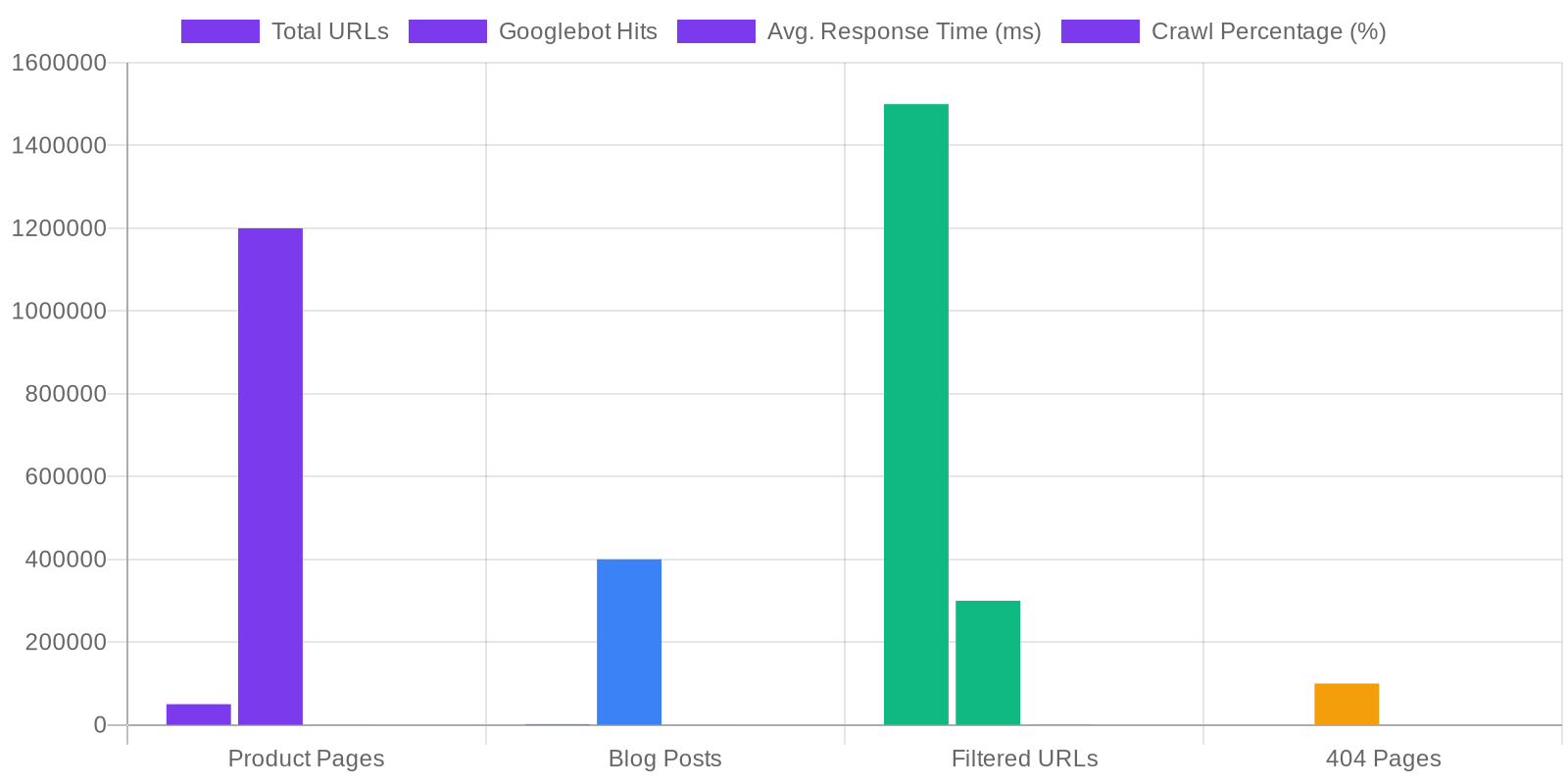

Advanced Analysis: Server Logs, Core Web Vitals, and Automation

To truly master crawl efficiency, you must move beyond standard tools and analyze the raw data of how Googlebot interacts with your site. This is where server log analysis becomes indispensable, providing the ultimate source of truth for your technical SEO efforts.

Unlocking Insights with Server Log Analysis

Server access logs record every request that reaches your server, including every hit from Googlebot (if a CDN answers some requests from its cache, those hits appear only in the CDN's logs). By analyzing these logs, you can answer critical questions:

- Which sections of my site does Googlebot crawl most frequently?

- Is Googlebot wasting time on redirected or error pages?

- How often are my new product pages being discovered?

- Are there valuable pages that are rarely or never crawled?

This analysis provides a clear roadmap for optimizing crawl budget by revealing exactly where it’s being wasted. A comprehensive SaaS technical SEO audit must include this step.
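As a starting point for the questions above, the sketch below parses access-log lines in the Apache/Nginx "combined" format (an assumption; adjust the regular expression to your server's format) and counts Googlebot hits per top-level section and per status code. Because the user-agent string can be spoofed, it also includes a reverse-then-forward DNS check following the verification method Google documents; that check needs network access, handles IPv4 only as written, and its results should be cached rather than computed once per log line.

```python
import re
import socket
from collections import Counter

# Assumes the Apache/Nginx "combined" log format; adjust to your server's format.
LINE_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Yield parsed requests whose user agent *claims* to be Googlebot."""
    for line in lines:
        match = LINE_RE.match(line)
        if match and "Googlebot" in match["agent"]:
            yield match


def summarize(lines):
    """Count Googlebot hits per top-level site section and per status code."""
    by_section, by_status = Counter(), Counter()
    for hit in googlebot_hits(lines):
        path = hit["path"].split("?", 1)[0]  # ignore query strings when grouping
        by_section["/" + path.strip("/").split("/", 1)[0]] += 1
        by_status[hit["status"]] += 1
    return by_section, by_status


def is_verified_googlebot(ip):
    """Reverse DNS must end in googlebot.com/google.com and resolve back to `ip` (IPv4 only)."""
    try:
        host = socket.gethostbyaddr(ip)[0]
        if not host.endswith((".googlebot.com", ".google.com")):
            return False
        return ip in socket.gethostbyname_ex(host)[2]
    except OSError:  # socket.herror and socket.gaierror are both OSError subclasses
        return False
```

Sections with many hits but few valuable URLs, or a high share of 3xx/4xx statuses, are the first candidates for `robots.txt` rules or link cleanup.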

Correlating Crawl Data with Core Web Vitals

The next level of analysis is to overlay your crawl data with performance metrics. By correlating Googlebot hits with Core Web Vitals data from Google Search Console or a Real User Monitoring (RUM) tool, you can check whether slow page templates are crawled less frequently. For example, if you discover that pages with a poor Largest Contentful Paint (LCP) receive 30% fewer Googlebot hits, you have a data-backed case for prioritizing performance optimizations on those templates. Treat such a gap as a lead rather than proof of cause: because crawl rate responds mainly to server response time, confirm whether the same templates also have a high TTFB.
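A minimal way to run this comparison is to group templates by Google's published LCP thresholds (good at or below 2.5 s, poor above 4 s) and compare average Googlebot hits per URL across the groups. The input dictionaries are a hypothetical shape (per-template hit averages from your log analysis and 75th-percentile LCP from field data), and the function names are illustrative. The sketch shows the arithmetic behind a claim like "30% fewer hits"; it is not evidence that LCP causes the gap.

```python
from statistics import mean


def lcp_bucket(lcp_ms):
    """Google's published LCP thresholds: good <= 2.5 s, poor > 4 s."""
    if lcp_ms <= 2500:
        return "good"
    if lcp_ms <= 4000:
        return "needs improvement"
    return "poor"


def mean_hits_by_lcp_bucket(hits, lcp_ms):
    """hits: {template: Googlebot hits per URL}; lcp_ms: {template: p75 LCP in ms}."""
    buckets = {}
    for template, count in hits.items():
        if template in lcp_ms:  # skip templates without field data
            buckets.setdefault(lcp_bucket(lcp_ms[template]), []).append(count)
    return {bucket: mean(counts) for bucket, counts in buckets.items()}


def percent_difference(value, baseline):
    """Relative difference of `value` versus `baseline`, in percent."""
    return (value - baseline) / baseline * 100


# Hypothetical per-template data.
hits = {"product": 100, "category": 80, "blog": 60, "docs": 60}
lcp = {"product": 2000, "category": 2400, "blog": 4500, "docs": 5200}
by_bucket = mean_hits_by_lcp_bucket(hits, lcp)
print(by_bucket, percent_difference(by_bucket["poor"], by_bucket["good"]))
```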

Embracing Automation

Manually analyzing logs and monitoring site health is not scalable for large sites. In 2026, leveraging automation is key. Tools can be configured to parse server logs in real-time, automatically flag crawl anomalies, detect new redirect chains, and monitor sitemap health. This proactive approach turns optimizing crawl budget from a periodic task into a continuous, automated process that protects your organic performance.
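One simple form of automated anomaly flagging is a rolling z-score over daily Googlebot hit counts: a day is flagged when it deviates from the trailing window's mean by more than a set number of standard deviations. The function name, the 7-day window, and the threshold of 3 are illustrative assumptions. Note that a flagged dip then enters the trailing window, so a median-based baseline can be more robust in production.

```python
from statistics import mean, pstdev


def flag_crawl_anomalies(daily_hits, window=7, threshold=3.0):
    """Return indices of days whose Googlebot hit count deviates from the
    trailing `window`-day mean by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(daily_hits)):
        history = daily_hits[i - window:i]
        mu, sigma = mean(history), pstdev(history)
        if sigma == 0:
            # Flat history: any change at all is unusual.
            if daily_hits[i] != mu:
                flagged.append(i)
            continue
        if abs(daily_hits[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily Googlebot hits with a sudden drop on day 8.
daily = [1000, 1020, 980, 1010, 990, 1005, 995, 1000, 400, 1000]
print(flag_crawl_anomalies(daily))
```

The same function can run per site section, so a drop limited to one template (for example after a deploy that broke internal links) is not hidden by stable totals elsewhere.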

The Business Impact of Strategic Crawl Budget Optimization

Optimizing crawl budget is not just a technical checkbox; it’s a strategic initiative with a direct impact on your bottom line. By ensuring Googlebot focuses on your most valuable pages, you accelerate the entire SEO flywheel. New features, products, and content get indexed faster, reducing the time-to-value for your marketing efforts. This leads to increased organic traffic, more qualified leads, and a stronger competitive position. Don’t let a bloated site structure and inefficient crawling hold back your growth.

Ready to unlock your site’s true potential? At https://abdurrahmansimsek.com, we specialize in data-driven technical SEO for ambitious SaaS and e-commerce brands. Let’s build a robust strategy for optimizing crawl budget and drive sustainable organic growth.

Conclusion

In 2026, for any large SaaS or e-commerce website, optimizing crawl budget is a fundamental pillar of a successful SEO strategy. It is the mechanism that ensures your best content is seen, indexed, and ranked by search engines. By implementing a proactive workflow that combines strategic `robots.txt` management, clean sitemaps, a robust internal linking structure, and advanced server log analysis, you can guide Googlebot effectively. This not only improves indexation speed but also reduces server load and directly contributes to your business’s growth. Take control of your crawl budget today to secure your organic visibility for tomorrow.

Explore how our expert technical SEO services can help at https://abdurrahmansimsek.com.

Frequently Asked Questions

What is crawl budget and why is optimizing crawl budget critical for large sites?

Crawl budget is the number of URLs Googlebot can and wants to crawl on your site within a given timeframe. For large sites with thousands of pages, optimizing crawl budget is critical to ensure search engines discover your most important content efficiently. This prevents indexation delays for key product or service pages and maximizes your site’s visibility.

What are the most common issues that prevent optimizing crawl budget effectively?

Common issues that waste crawl budget include duplicate content from faceted navigation, broken internal links (404s), redirect chains, and slow server response times. These problems force Googlebot to crawl low-value or non-existent pages, hindering its ability to find your valuable content. Addressing these technical flaws is a core part of any successful SEO strategy.

How do sitemaps and robots.txt contribute to optimizing crawl budget?

Sitemaps and robots.txt are fundamental tools for guiding search engine crawlers. A clean XML sitemap directs Googlebot to your priority pages, while the robots.txt file prevents it from crawling low-value areas. Using both correctly is a foundational step for optimizing crawl budget and directing search engine attention where it matters most.

What is the primary SEO impact of a poorly managed crawl budget?

A poorly managed crawl budget can lead to slow indexation of new content, important pages being missed by Google, and wasted server resources. This directly harms your site’s ability to rank for competitive terms. Proactively optimizing crawl budget ensures your most valuable pages are consistently found and indexed.

How does page speed influence crawl budget optimization?

Page speed, especially server response time, has a direct impact on your crawl rate limit. A faster site allows Googlebot to crawl more pages in the same amount of time, effectively increasing your crawl capacity. Improving site performance is therefore a crucial component of crawl budget optimization.
