Log File Analysis SEO: Unlocking Googlebot’s Crawl Patterns

Log file analysis SEO provides direct, unfiltered insights into Googlebot’s behavior, crucial for optimizing large websites. This guide details a scalable workflow for log file analysis SEO, revealing crawl patterns, identifying server status codes, and improving crawl efficiency. By examining server access logs, you can pinpoint issues like index bloat and optimize crawl budget, ensuring important content is discovered. Understanding bot activity through log file analysis SEO is fundamental for technical SEO success and maximizing search engine visibility.

AbdurrahmanSimsek.com specializes in advanced SEO strategies, delivering data-driven solutions for complex digital challenges. Our commitment to ethical practices and measurable outcomes ensures clients achieve sustainable growth and superior online performance.

To explore your options, contact us to schedule your consultation.

Understanding how search engines like Google interact with your website is crucial for SEO success. Log file analysis for SEO provides direct insights into Googlebot’s behavior, revealing crawl patterns, identifying issues, and optimizing crawl budget. This guide will walk you through a scalable workflow, especially for large websites, to leverage log data for significant SEO gains. By dissecting server access logs, you can pinpoint exactly what Googlebot is doing, where it’s encountering problems, and how to improve your site’s crawl efficiency and overall indexing in 2026.

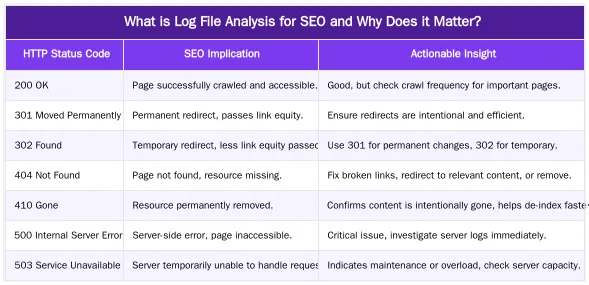

What is Log File Analysis for SEO and Why Does it Matter?

Log file analysis for SEO involves examining server access logs to understand how search engine bots, particularly Googlebot, interact with your website. It provides a direct, unfiltered view of bot activity, revealing which URLs are crawled, when they are accessed, and the server’s response. This direct data is invaluable for identifying technical SEO issues and optimizing crawl budget, especially for large, complex sites.

The Core Benefits: Unveiling Bot Behavior

Log files offer a direct window into Googlebot’s activity, showing precisely which pages are crawled, how often, and any errors encountered. Unlike third-party crawl simulations, log data reflects actual bot behavior. For large websites with thousands or millions of pages, this insight is critical. It helps identify orphaned pages, discover crawl traps, and ensure important content is frequently visited by search engines. Understanding this behavior is fundamental to effective log file analysis for SEO and improving overall site performance.

Decoding Googlebot: Interpreting Server Log Data

Accessing server log files typically involves downloading them from your web hosting provider, CDN, or server directly (e.g., Apache, Nginx). These files contain a chronological record of every request made to your server. Interpreting this raw data is key to effective log file analysis for SEO. You’ll look for specific data points to diagnose crawl issues and understand bot activity.

Key Data Points in Server Access Logs

Common fields in server access logs provide a wealth of information. The IP address identifies the requester, while the timestamp indicates when the request occurred. The request line shows the HTTP method (GET/POST) and the URL accessed. The HTTP status code reveals the server’s response, and the user agent identifies the client (e.g., Googlebot, Bingbot, a browser). The referrer indicates the previous page visited. Analyzing these fields helps paint a clear picture of bot interactions.

Identifying Common Crawl Issues

By analyzing log data, you can quickly spot issues. A high volume of 404s indicates broken internal or external links, wasting crawl budget. Excessive 302 redirects suggest inefficient redirect chains that dilute link equity. Unexpected bot activity on non-existent or low-value pages can signal crawl budget waste. Identifying these patterns through log file analysis for SEO allows for targeted technical fixes.

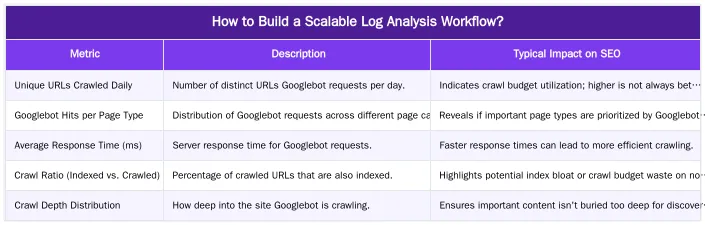

How to Build a Scalable Log Analysis Workflow?

For large websites, processing gigabytes or even terabytes of log data manually is impossible. A scalable workflow is essential for efficient log file analysis for SEO. This involves automated data collection, robust processing, and clear visualization. The goal is to transform raw log entries into actionable insights without overwhelming resources.

Essential Tools for Large-Scale Analysis

Several tools facilitate large-scale log analysis. For smaller to medium sites, the Screaming Frog Log File Analyser is excellent for importing and visualizing log data. However, for enterprise-level data volumes, more robust solutions are needed. The ELK Stack (Elasticsearch, Logstash, Kibana) is a popular choice, offering powerful data ingestion (Logstash), storage and indexing (Elasticsearch), and visualization (Kibana). Custom scripting with Python or R can also be used for tailored processing. The challenge for large sites lies in efficiently parsing, filtering, and storing vast amounts of data before analysis can even begin. For more on optimizing technical aspects, consider a SaaS technical SEO audit in 2026.

Data Collection and Processing for Enterprise Sites

The first step is reliable data collection. This often involves setting up automated processes to transfer log files from web servers (e.g., via SFTP, rsync, or cloud storage integrations) to a central processing system. For high-traffic sites, real-time streaming of logs might be necessary. Once collected, data processing involves parsing the raw text into structured formats, filtering out irrelevant entries (e.g., non-bot traffic, internal IP addresses), and enriching the data (e.g., geo-locating IPs, mapping URLs to page types). This structured data is then ready for analysis, making log file analysis for SEO manageable.

Key Metrics and Data Points for Log File Analysis

Beyond basic status codes, advanced log file analysis for SEO focuses on specific metrics to gauge crawl efficiency and bot behavior. These insights are crucial for optimizing how search engines interact with your content. Our expertise at AbdurrahmanSimsek.com in handling complex SaaS platforms has shown that granular analysis of these metrics can unlock significant performance gains.

Analyzing Crawl Frequency and Depth

Monitoring crawl frequency reveals how often Googlebot visits specific pages. High-priority pages should be crawled frequently. If important content is rarely crawled, it suggests a crawl budget issue. Crawl depth indicates how deep into your site Googlebot ventures. If bots aren’t reaching important content buried deep, it points to internal linking or site structure problems. By cross-referencing log data with site structure, you can identify areas where Googlebot struggles to discover valuable content. This is a core component of effective log file analysis for SEO.

Identifying Crawl Budget Waste and Opportunities

Log files directly expose crawl budget waste. This includes Googlebot repeatedly crawling low-value pages (e.g., faceted navigation filters, old parameter URLs, paginated archives that should be noindexed), encountering numerous 404s, or getting stuck in redirect loops. Conversely, they highlight opportunities: pages that are important but rarely crawled, indicating a need for stronger internal links or sitemap updates. Understanding these patterns is fundamental for optimizing crawl budget. For more insights, refer to Google’s official documentation on large site crawl management.

Advanced Techniques: Identifying Fake Googlebots and Malicious Activity

While log file analysis for SEO primarily focuses on legitimate search engine bots, it’s also a powerful tool for identifying deceptive or malicious activity. Fake Googlebots can consume server resources, skew analytics, and even attempt to scrape content. Recognizing and mitigating these threats is crucial for site security and performance.

How to Spot a Fake Googlebot

The most reliable way to identify a fake Googlebot is through a reverse DNS lookup. A legitimate Googlebot IP address will resolve to a hostname in the googlebot.com or google.com domain. You can then perform a forward DNS lookup on that hostname, which should resolve back to the original IP address. If this two-step verification fails, the bot is not Googlebot, regardless of its user agent string. Many malicious bots spoof the Googlebot user agent to appear legitimate. Implementing this check within your log analysis workflow can filter out significant noise and protect your server. For more technical details on verification, consult Google’s official guide to verifying Googlebot.

Detecting Malicious Bot Activity

Beyond fake Googlebots, log files can reveal other malicious bot activity, such as brute-force attacks, content scraping, or denial-of-service attempts. Look for unusual patterns like:

- High request volumes from a single IP or IP range: This could indicate scraping or an attack.

- Requests for non-existent URLs or administrative paths: Bots probing for vulnerabilities.

- Rapid-fire requests for the same URL: Often a sign of a brute-force attack or aggressive scraping.

- Unusual user agent strings: While not always malicious, they warrant investigation.

Identifying these patterns allows you to block malicious IPs or implement CAPTCHAs, protecting your site and preserving server resources for legitimate users and search engine crawlers. This proactive security is an often-overlooked benefit of comprehensive log file analysis for SEO.

Optimizing Crawl Budget and Indexing with Log File Insights

The ultimate goal of log file analysis for SEO is to translate raw data into actionable strategies that improve your site’s crawl budget and indexing. By understanding Googlebot’s behavior, you can guide it more effectively, ensuring important content is discovered and indexed efficiently.

Improving Crawl Efficiency and Addressing Index Bloat

Log file data is invaluable for optimizing crawl budget. If Googlebot spends significant time on low-value or non-indexable pages (e.g., internal search results, old product variations, duplicate content), you’re wasting valuable crawl budget. Strategies include:

- Noindexing/Disallowing: Use `noindex` tags or `robots.txt` to prevent crawling of irrelevant pages.

- Canonicalization: Consolidate duplicate content to a single URL.

- Internal Linking: Strengthen internal links to important pages, signaling their value.

- Sitemap Optimization: Ensure sitemaps only contain indexable, high-priority URLs.

Addressing index bloat – the presence of too many low-quality or duplicate pages in Google’s index – is also critical. Log data helps identify these pages, allowing you to take corrective action. For a deeper dive into fixing crawl budget issues, visit our guide on how to fix crawl budget issues. Similarly, for broader indexing problems, consult our guide to fixing indexing issues in 2026.

Prioritizing Content for Googlebot

With insights from log file analysis for SEO, you can strategically prioritize content. Pages that are rarely crawled but are commercially important or frequently updated should receive more internal links, be included in XML sitemaps, and potentially be submitted via the Google Indexing API if applicable. Conversely, pages that are over-crawled but offer little SEO value can be de-prioritized. This targeted approach ensures Googlebot’s efforts align with your business objectives, leading to better visibility for your most valuable content.

Integrating Log File Analysis with Broader SEO Strategy

Log file analysis for SEO is not a standalone activity; it’s a powerful component that should integrate seamlessly with your broader SEO strategy. The insights gained from understanding Googlebot’s behavior can inform content creation, internal linking, and overall site architecture. This holistic approach maximizes your SEO efforts.

By identifying pages that Googlebot frequently crawls but rarely indexes, you can pinpoint content quality issues. If Googlebot ignores new, important content, it signals a need for better internal linking or sitemap updates. At AbdurrahmanSimsek.com, we leverage such data to refine content clusters and internal link equity distribution, ensuring that our clients’ most valuable pages receive optimal crawl attention. This strategic integration transforms raw log data into a roadmap for improved organic performance. Ready to transform your SEO with data-driven insights? Explore our services today.

Conclusion

Log file analysis for SEO is an indispensable tool for any serious technical SEO professional, especially for large websites. It offers unparalleled direct insights into Googlebot’s crawling behavior, allowing you to identify inefficiencies, optimize crawl budget, and address critical technical issues. By implementing a scalable workflow, leveraging the right tools, and understanding key metrics, you can transform raw server data into actionable strategies. This leads to improved crawl efficiency, better indexing, and ultimately, enhanced organic visibility for your most important content in 2026. Don’t leave your crawl budget to chance; harness the power of log files. To learn how AbdurrahmanSimsek.com can help you implement a robust log file analysis strategy and elevate your technical SEO, visit our website.

Frequently Asked Questions

What is the primary goal of log file analysis seo?

The main goal of log file analysis seo is to understand exactly how search engine bots, like Googlebot, interact with your website. By analyzing this raw data, you can see which pages are crawled, how often, and identify any crawl errors. This information is crucial for optimizing your site’s technical health and crawl budget.

How does log file analysis seo improve a content strategy?

Log file analysis seo reveals which pages Googlebot crawls most frequently, indicating what it considers important. This insight helps you prioritize content updates for high-value pages and identify important content that is being ignored. You can then improve internal linking from frequently crawled pages to boost the visibility of under-crawled content.

Can log file analysis seo help identify wasted crawl budget?

Absolutely. Log files provide the only definitive data on where Googlebot spends its time on your site. A thorough log file analysis seo process will uncover frequent crawls of low-value URLs, such as pages with parameters or faceted navigation. By identifying this wasted effort, you can block these pages and redirect crawl budget to your most important content.

Is log file analysis necessary for a small website?

While beneficial for any site, it is not always a top priority for smaller websites with fewer than 10,000 pages. However, for large e-commerce, publisher, or enterprise sites, it is an essential technical SEO practice. For these larger sites, managing crawl budget and ensuring efficient indexing is critical for search performance.

What tools are needed for log file analysis?

First, you need access to your server’s raw access logs, which may require contacting your hosting provider. For analysis, you can use specialized SEO tools like Screaming Frog SEO Log File Analyser, Semrush, or JetOctopus. For very large sites, data processing tools like BigQuery or an ELK Stack are often used for a more scalable workflow.

Ruxi Data brings together multi-model AI, automated website crawling, live indexation checks, topical authority mapping, E-E-A-T enrichment, schema generation, and full pipeline automation — from crawl to WordPress publish to social posting — all in one platform built for agencies and freelancers who run on results.