Crawl Budget Optimization: Enhancing Medical Site Visibility

Effective crawl budget optimization ensures large medical practices maximize search engine visibility for critical patient-facing content. This article details how to diagnose crawl waste using log file analysis, guiding Googlebot to prioritize high-value YMYL pages like doctor profiles and procedure information. Strategies include managing duplicate content, optimizing faceted navigation, and addressing URL parameters to improve crawl efficiency. By reducing the cost of retrieval, medical websites enhance their E-E-A-T signals and achieve better indexing for semantic authority.

Abdurrahman Şimşek, a Semantic SEO Strategist, provides expert insights into technical SEO challenges specific to large medical domains. This guidance helps medical practices improve their digital footprint by ensuring search engines efficiently discover and rank essential health information.

To explore your options, contact us to schedule a consultation: Book a Semantic SEO Audit, or use the Direct WhatsApp Strategy Line: +90 506 206 86 86.

For large medical practices, crawl budget optimization ensures patient-facing content is discovered and indexed by search engines. This article defines crawl budget, explains its importance for large medical websites, and outlines entity-driven strategies to optimize how Googlebot crawls your site, ensuring valuable content like doctor profiles and procedure pages achieves visibility.

What is Crawl Budget and Why Does it Matter for Large Medical Practices?

Crawl budget is the set of URLs Googlebot can and wants to crawl on a website within a given timeframe. It is shaped by how much crawling the server can sustain and by how much demand Google has for the site’s content. For large medical practices with thousands of pages (doctor profiles, procedures, testimonials, blogs), an optimized crawl budget ensures Google discovers and indexes important content instead of wasting resources on low-value or duplicate pages. Inefficient crawling can hinder the visibility of YMYL (Your Money or Your Life) content, which in turn affects patient acquisition.

Understanding Googlebot’s Priorities on Medical Domains

Googlebot prioritizes crawling based on perceived value, freshness, and authority, especially for YMYL medical content. On large medical sites, Google does not necessarily crawl every page. It focuses on pages that are frequently updated, have strong internal links, and are considered authoritative. Pages deemed less important or technically problematic may be crawled less often or not at all, which limits their ability to rank.

Diagnosing Crawl Waste: Identifying Bottlenecks in Your Medical Site’s Architecture

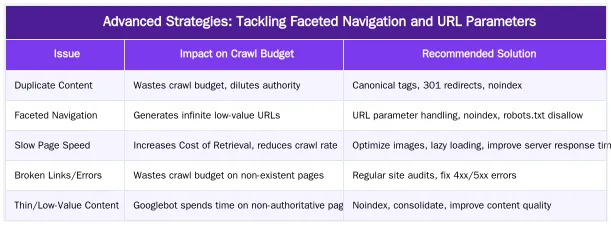

Large medical websites often have technical debt that causes crawl waste. Common issues include duplicate content from printer-friendly versions, session IDs, or syndicated content. Low-value pages like old blog comments, internal search results, or administrative sections consume crawl resources without contributing to visibility. Inefficient site architecture with deep navigation or orphaned pages worsens these problems. Identifying these bottlenecks improves crawl efficiency and reduces the Cost of Retrieval for search engines.
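Deep navigation and orphaned pages can be checked with a breadth-first search over the internal link graph. The sketch below is only an illustration: the `LINKS` graph, the `KNOWN_PAGES` set, and every URL in them are invented, and in practice they would come from a site crawl and the XML sitemap.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def find_orphans(links: dict[str, list[str]], known_pages: set[str], home: str) -> set[str]:
    """Pages that exist (e.g. in the sitemap) but cannot be reached via internal links."""
    return known_pages - set(click_depths(links, home))


# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/doctors/", "/procedures/"],
    "/doctors/": ["/doctors/dr-example/"],
    "/procedures/": ["/procedures/knee-replacement/"],
    "/procedures/knee-replacement/": ["/doctors/dr-example/"],
}
# Hypothetical list of all pages known to exist on the site.
KNOWN_PAGES = {
    "/", "/doctors/", "/procedures/", "/doctors/dr-example/",
    "/procedures/knee-replacement/", "/procedures/old-leaflet/",
}

if __name__ == "__main__":
    for page, depth in sorted(click_depths(LINKS, "/").items(), key=lambda item: item[1]):
        print(depth, page)
    print("orphans:", find_orphans(LINKS, KNOWN_PAGES, "/"))
```

Pages with a high click depth, or pages that appear only in the orphan set, are candidates for stronger internal links.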

Leveraging Log File Analysis to Uncover Crawl Patterns

Server log files show how Googlebot interacts with your website. Analyzing logs identifies which URLs Googlebot requests most frequently, which status code each request returned, and, where the log format records it, how long the server took to respond. This data pinpoints frequently crawled low-value pages, important pages that are uncrawled or under-crawled, and recurring crawl errors (e.g., 404s, 500s). For more information, explore log file analysis for SEO.
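A minimal sketch of this kind of analysis, assuming the server writes the common "combined" access log format. The sample lines, IP addresses, and paths are invented for illustration. Matching on the user-agent string alone is also an assumption: it can be spoofed, so a real audit would verify Googlebot requests as well, for example by reverse DNS lookup.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Assumed "combined" log format: ip - - [time] "METHOD url PROTO" status bytes "referer" "agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Count Googlebot requests per path and error responses per (path, status)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # skip unparseable lines and other user agents
        path = urlsplit(match["url"]).path  # drop the query string to group URL variants
        hits[path] += 1
        status = int(match["status"])
        if status >= 400:
            errors[(path, status)] += 1
    return hits, errors


# Invented sample data; a real run would read lines from the access log file.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LINES = [
    f'192.0.2.10 - - [10/Mar/2025:06:25:14 +0000] "GET /search?q=knee HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Mar/2025:06:25:15 +0000] "GET /search?q=hip HTTP/1.1" 200 5012 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Mar/2025:06:25:16 +0000] "GET /doctors/dr-example/ HTTP/1.1" 200 8120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Mar/2025:06:25:17 +0000] "GET /old-form.pdf HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Mar/2025:06:25:18 +0000] "GET /doctors/dr-example/ HTTP/1.1" 200 8120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

if __name__ == "__main__":
    hits, errors = summarize_googlebot(SAMPLE_LINES)
    print("most crawled:", hits.most_common(3))
    print("crawl errors:", dict(errors))
```

In this invented sample, internal search results receive more Googlebot requests than the doctor profile, which is the pattern that would indicate crawl waste.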

Strategic Crawl Budget Optimization Tactics for Medical Websites

Directives like robots.txt and noindex tags control what Googlebot accesses and indexes. An XML sitemap guides crawlers to important content, while internal linking reinforces topical authority and directs Googlebot. Canonicalization manages duplicate content by defining the authoritative version of a page. A clean, logical URL structure also aids crawling and indexing.
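To see where canonicalization is needed, it can help to collapse URL variants to a single normalized form and list the groups that contain more than one variant. The following is a sketch under stated assumptions: the `IGNORED_PARAMS` set and the `clinic.example` URLs are hypothetical, and which parameters are safe to ignore depends entirely on the site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: parameters that never change page content on this hypothetical site.
IGNORED_PARAMS = {"sessionid", "utm_source", "utm_medium", "utm_campaign", "print"}


def canonical_url(url: str) -> str:
    """Drop ignored parameters, sort the rest, lowercase the host, strip the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Map each normalized URL to its variants, keeping only groups with duplicates."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}


# Invented example URLs.
URLS = [
    "https://clinic.example/doctors/dr-example/",
    "https://clinic.example/doctors/dr-example/?sessionid=abc123",
    "https://clinic.example/doctors/dr-example/?print=1",
    "https://clinic.example/procedures/?specialty=cardiology",
]

if __name__ == "__main__":
    for canon, variants in group_duplicates(URLS).items():
        print(canon, "<-", len(variants), "variants")
```

Each group in the output is a candidate for a single `rel="canonical"` target, or for removing the parameterized links at the source.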

Implementing Robots.txt and Noindex for Precision Control

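The `robots.txt` file tells crawlers which parts of your site they may request. Use it to block low-priority sections like internal search results, administrative areas, or staging environments. The `noindex` directive, set in a robots meta tag or an `X-Robots-Tag` HTTP header, keeps a page out of the index even though it is crawled. It suits pages that should stay accessible to users but out of search results, like outdated patient forms, thank-you pages, or filtered views with little unique value. The distinction between `robots.txt` (crawl blocking) and `noindex` (index blocking) is key for controlling site visibility, and the two should not be combined on the same URL: if `robots.txt` blocks a page, Googlebot never fetches it and so never sees its `noindex`, and a blocked URL can still be indexed without its content when other pages link to it.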
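Before deploying new rules, a draft `robots.txt` can be tested against a list of URLs. This sketch uses Python's standard `urllib.robotparser`. The rules and paths are hypothetical, and the standard-library parser uses simple prefix matching, so it will not reproduce Google's handling of `*` and `$` wildcards. Treat it as a rough check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft rules for a medical practice site (plain prefixes only).
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /admin/
Disallow: /staging/
"""


def is_crawlable(robots_txt: str, path: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the draft rules allow the given user agent to fetch the path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, path)


if __name__ == "__main__":
    for path in ["/search?q=knee", "/admin/users", "/doctors/dr-example/"]:
        verdict = "allowed" if is_crawlable(ROBOTS_TXT, path) else "blocked"
        print(f"{path}: {verdict}")
```

A check like this makes it harder to block a high-value section by accident, for example with an overly short `Disallow` prefix.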

Optimizing XML Sitemaps and Internal Linking for Crawl Efficiency

An accurate XML sitemap is a roadmap for Googlebot, listing important pages to be indexed. Regularly updating your sitemap ensures new doctor profiles, procedure pages, or research articles are discovered quickly. Internal linking also directs Googlebot. Linking semantically related content guides crawlers to high-value pages, reinforces topical authority, and distributes link equity, improving crawl efficiency and prioritizing medical entities.
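Keeping the sitemap current is easier when it is generated from the site's own page data. The sketch below builds a small sitemap with Python's standard library. The `build_sitemap` helper and the example URLs and dates are illustrative assumptions, and a real site would pull them from its CMS.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> bytes:
    """Build sitemap XML from (absolute URL, last-modified date as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # Returns UTF-8 bytes including the XML declaration.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Invented example pages.
PAGES = [
    ("https://clinic.example/doctors/dr-example/", "2025-03-10"),
    ("https://clinic.example/procedures/knee-replacement/", "2025-02-21"),
]

if __name__ == "__main__":
    print(build_sitemap(PAGES).decode("utf-8"))
```

Only canonical, indexable URLs belong in the list. The sitemap protocol limits a single file to 50,000 URLs, so a very large site would split its output into several files under a sitemap index.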

Reducing Cost of Retrieval: An E-E-A-T Driven Approach to Crawl Budget

Crawl budget management is, at its core, about reducing the ‘Cost of Retrieval’ (CoR) for search engines. In Abdurrahman Şimşek’s methodology, this term covers the resources Googlebot expends to discover, crawl, and understand content, and the goal is to minimize them. For medical websites, an optimized crawl budget directs Google’s resources to authoritative, expert-reviewed content. This strengthens E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals by helping Google identify and prioritize content from verified medical professionals. Tools like Ruxi Data’s semantic engine aid this process by structuring content in an Entity-Attribute-Value (EAV) model, making it easier for Google to process and attribute expertise.

The Interplay of Site Performance and Crawl Efficiency

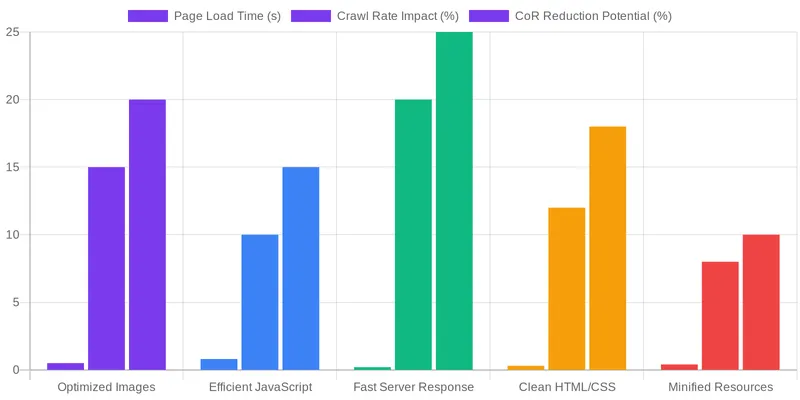

Site performance affects both crawl efficiency and the cost of retrieval. Fast server responses, good Core Web Vitals, and a sound technical foundation reduce the cost for Googlebot to retrieve and process pages. When the server responds quickly and reliably, Googlebot can fetch more pages in the same time, so more of the crawl budget reaches important content. Slow responses or recurring server errors can cause Googlebot to lower its crawl rate, delaying discovery and indexing. Optimizing performance is therefore foundational to any crawl strategy.

Partner with an Expert to Master Your Medical Website’s Crawl Budget

Managing crawl budget for a large medical practice requires expertise. Abdurrahman Şimşek, a London-based Semantic SEO Strategist, specializes in medical SEO, semantic engineering, and ‘Cost of Retrieval’ optimization. His understanding of the London private healthcare market and experience with complex medical websites helps clinics dominate local organic search and attract patients. An expert ensures your technical foundation supports patient acquisition goals.

Book Your Semantic SEO Audit Today

Book a Semantic SEO Audit with Abdurrahman Şimşek to assess your crawl budget efficiency and develop a tailored optimization strategy. For direct inquiries, use the Direct WhatsApp Strategy Line: +90 506 206 86 86.

Conclusion

Crawl budget management is a critical aspect of SEO for large medical practices. Guiding Googlebot to valuable content and minimizing crawl waste enhances indexing, strengthens E-E-A-T signals, and builds topical authority. This ensures your medical website remains discoverable and competitive. For support optimizing your site’s technical foundation and visibility, partner with a specialist in semantic SEO and web development. Visit abdurrahmansimsek.com to learn more, Book a Semantic SEO Audit, or connect via Direct WhatsApp Strategy Line: +90 506 206 86 86.

Frequently Asked Questions

What is crawl budget in the context of a large medical practice website?

Crawl budget refers to the number of pages Googlebot is willing and able to crawl on your website within a specific period. For large medical practices with extensive content like doctor profiles, service pages, and patient resources, effective crawl budget optimization is crucial. It ensures Google prioritizes discovering and indexing your most valuable, patient-facing content, rather than wasting resources on less important or duplicate pages.

What is the single biggest waste of crawl budget for clinic websites?

The most significant drain on crawl resources for medical clinic websites often comes from URL parameters. These are typically generated by internal search filters, booking systems, or patient portals, creating numerous low-value or duplicate URLs. Googlebot then spends valuable time crawling these redundant pages instead of your core service or doctor profile content. Addressing these issues is crucial for effective crawl management.

How can I tell if I have a crawl budget problem, and why is crawl budget optimization important?

A primary indicator of a crawl budget issue is a significant delay in the indexing of new or updated important pages. If your new service offerings or doctor profiles take weeks to appear in Google’s index, it suggests Googlebot is overspending resources elsewhere. This highlights the need for strategic crawl budget management to ensure your critical content gains visibility quickly.

Is using ‘nofollow’ on internal links an effective strategy for crawl budget optimization?

No, using ‘nofollow’ on internal links is generally an outdated and ineffective method for managing crawl resources. Google treats nofollow as a hint, so it may still crawl nofollowed links, and the attribute also weakens the internal linking that signals which pages matter. Proper crawl budget optimization relies on more direct methods, such as using the ‘robots.txt’ file to block entire low-value sections from crawling. ‘noindex’ tags remain useful for keeping specific pages out of the index, but they do not save crawl resources on their own, because Googlebot must still fetch a page to see the tag.

How does site speed impact crawl budget, and how does it relate to crawl budget optimization?

Site speed directly influences your crawl capacity. A faster server response time and quicker page load speeds enable Googlebot to process and crawl a greater number of pages within the same timeframe. Conversely, a slow website actively reduces the efficiency of Google’s crawling efforts. Improving site speed is a fundamental aspect of effective crawl budget optimization, allowing more of your important content to be discovered.

How can a large medical practice get expert help with their crawl budget optimization strategy?

Large medical practices can partner with a specialist like Abdurrahman Şimşek, a Semantic SEO Strategist. He offers specialized semantic SEO audits to refine your crawl budget strategy, focusing on entity architecture and reducing the cost of retrieval. You can book a Semantic SEO Audit via his website or use the Direct WhatsApp Strategy Line: +90 506 206 86 86 for immediate consultation.
