Cost of Retrieval SEO: Optimizing Googlebot’s Efficiency

Cost of retrieval (CoR) in SEO describes the computational resources Googlebot expends to crawl, render, and understand a webpage, which in turn affects search visibility. A high CoR, driven by factors like slow page speed, complex JavaScript rendering, and bloated DOM trees, depletes crawl budget and delays indexing. This article explains how optimizing website architecture, semantic HTML, and Core Web Vitals reduces retrieval cost, making sites more efficient for Googlebot’s information retrieval process. Understanding CoR helps improve technical SEO performance and supports comprehensive content indexing.

Abdurrahman Şimşek, a Semantic SEO Strategist, provides expert insights into mitigating high retrieval costs. This content offers actionable strategies for improving website efficiency, ensuring optimal resource allocation by search engines, and strengthening overall technical SEO foundations.

Cost of retrieval (CoR) is a technical SEO consideration that web developers often overlook. Optimizing CoR makes a site easier for Googlebot to digest, which supports search visibility and authority.

What is Cost of Retrieval (CoR) in SEO, and Why Does it Matter?

Cost of Retrieval (CoR) quantifies the computational resources Googlebot expends to crawl, render, and understand a webpage. It includes the time and processing power to execute JavaScript and parse HTML. A lower CoR indicates an efficient website, making it less resource-intensive for Google to process, which influences indexing and ranking.

The Hidden Tax on Your Website’s Visibility

A high CoR acts as a hidden tax on visibility. Google’s crawling resources are finite, so a site that demands excessive computational effort can exhaust its allocated crawl budget sooner. The result is fewer pages crawled, delayed indexing, and a slower understanding of the site’s topical authority. For large or frequently updated websites, a high retrieval cost can hinder search performance and organic reach.

How Google Calculates CoR: Deconstructing the Technical Factors

Page Speed, Rendering, and DOM Size: The Core Culprits

Slow loading times increase the resources Googlebot expends on each fetch. Complex JavaScript rendering adds computational overhead, and a bloated Document Object Model (DOM) tree forces Googlebot to process more data. Core Web Vitals (Largest Contentful Paint, Cumulative Layout Shift, and Interaction to Next Paint, which replaced First Input Delay in March 2024) are user-facing metrics, but they also reflect how complex a page is to render. Reducing DOM size and optimizing rendering minimizes retrieval cost. For managing crawl resources, see our guide on crawl budget optimization workflows.
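As a rough illustration of the DOM size point above, the following Python sketch counts element nodes and the deepest nesting level in a piece of HTML. The names `DomStats` and `dom_stats` are hypothetical, and the sketch only inspects raw markup: it does not execute JavaScript, so it will understate the size of pages that build their DOM on the client, and it assumes well-formed markup with explicit closing tags.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not increase depth.
VOID_ELEMENTS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
                 "link", "meta", "source", "track", "wbr"}


class DomStats(HTMLParser):
    """Counts element nodes and tracks the deepest nesting level."""

    def __init__(self):
        super().__init__()
        self.node_count = 0
        self.depth = 0
        self.max_depth = 0

    def handle_starttag(self, tag, attrs):
        self.node_count += 1
        if tag in VOID_ELEMENTS:
            # A void element sits one level below its parent but has no children.
            self.max_depth = max(self.max_depth, self.depth + 1)
            return
        self.depth += 1
        self.max_depth = max(self.max_depth, self.depth)

    def handle_startendtag(self, tag, attrs):
        # Self-closing syntax such as <br/>: count it, but do not descend.
        self.node_count += 1
        self.max_depth = max(self.max_depth, self.depth + 1)

    def handle_endtag(self, tag):
        if tag not in VOID_ELEMENTS and self.depth > 0:
            self.depth -= 1


def dom_stats(html: str) -> tuple:
    """Return (element_count, max_nesting_depth) for an HTML string."""
    parser = DomStats()
    parser.feed(html)
    parser.close()
    return parser.node_count, parser.max_depth


sample = "<html><body><div><p>Hi<br></p><img src='a.png'></div></body></html>"
print(dom_stats(sample))  # (6, 5)
```

Running a check like this across templates can highlight which page types carry the heaviest markup before any deeper rendering audit.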

Website Architecture and Crawl Depth: Guiding Googlebot Efficiently

A convoluted or overly deep website architecture makes it difficult for Googlebot to discover and prioritize pages. If content is buried many clicks from the homepage or poorly linked, Googlebot expends more effort navigating to it. A logical, hierarchical site structure with clear internal linking guides Googlebot, so pages are discovered with minimal computational effort. This approach is fundamental to crawl budget optimization.
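Crawl depth can be estimated with a breadth-first search over a site's internal link graph. The sketch below assumes the graph has already been collected (for example, from a crawler export) as a dictionary mapping each URL path to the paths it links to. The function name `click_depths` and the sample paths are hypothetical.

```python
from collections import deque


def click_depths(links: dict, home: str) -> dict:
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/services", "/blog"],
    "/services": ["/services/rhinoplasty"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/post-2"],
    "/orphan": ["/"],
}

depths = click_depths(site, "/")
# Pages that exist in the graph but cannot be reached from the homepage.
orphans = sorted(set(site) - set(depths))

print(depths["/blog/post-2"])  # 3
print(orphans)                 # ['/orphan']
```

Pages with a large depth value, or pages that appear in the orphan list, are candidates for stronger internal linking.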

Beyond Speed: The Semantic Dimension of Cost of Retrieval

Retrieval cost includes semantic clarity, not just technical speed. The easier it is for Google to comprehend content’s meaning and context, the lower its “understanding” cost, leading to more efficient information retrieval.

Semantic HTML5 and Structured Data: Clarity for Googlebot

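Semantic HTML5 uses tags that describe the role of the content they wrap, such as `<header>`, `<nav>`, `<main>`, `<article>`, and `<footer>`.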
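For the structured data half of this heading, one common approach is to embed schema.org markup as JSON-LD. The following Python sketch builds a minimal `Article` snippet. The function name `article_jsonld` and every value passed to it are placeholders chosen for illustration, and a real page would normally carry more properties than shown here.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Build a <script> tag containing minimal schema.org Article markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "<" so a value containing "</script>" cannot close the tag early.
    payload = payload.replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values, not taken from any real page.
print(article_jsonld(
    headline="Example headline",
    author_name="Example Author",
    date_published="2024-01-01",
    url="https://example.com/example-article",
))
```

Generating the markup from a single function keeps the property names consistent across templates, which is the main point of the sketch.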

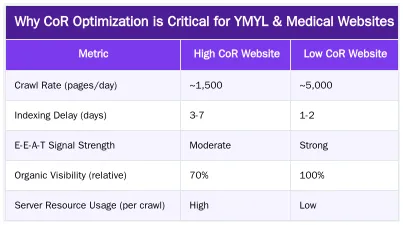

Why CoR Optimization is Critical for YMYL & Medical Websites

For Your Money Your Life (YMYL) sectors, such as medical clinics and plastic surgery practices, retrieval cost optimization is critical. Google demands the highest E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) standards from these industries. An efficient, semantically clear website supports these signals.

E-E-A-T and Trust: The Semantic Connection

A low retrieval cost contributes to E-E-A-T by signaling an authoritative and trustworthy website. When Googlebot can quickly and accurately process content, it reinforces the perception of a reliable source. In sensitive niches like healthcare, Google prioritizes efficient, clear, and unambiguous sources. Websites that are difficult to crawl or semantically ambiguous may struggle to establish trust signals. Google’s Quality Rater Guidelines emphasize high-quality, trustworthy content, which is easier for search engines to retrieve and understand.

Ruxi Data: Automating CoR Reduction for High-Stakes Domains

Infrastructure like Ruxi Data addresses the challenges of high-stakes domains. By automating topical map creation and Entity-Attribute-Value (EAV) modeling, Ruxi Data contributes to a lower retrieval cost. This system ensures content for medical clinics and plastic surgeons is technically optimized and semantically clear for Googlebot. This approach strengthens topical authority, a critical factor for ranking in competitive YMYL markets.
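To make the Entity-Attribute-Value idea concrete, here is a generic Python sketch of EAV triples and a simple completeness check. It is not Ruxi Data's implementation, which this article does not describe. The entity names, attributes, and values are hypothetical placeholders.

```python
from collections import defaultdict

# Hypothetical triples: (entity, attribute, value). All values are placeholders.
TRIPLES = [
    ("Clinic A", "city", "London"),
    ("Clinic A", "specialty", "rhinoplasty"),
    ("Clinic B", "city", "London"),
]


def pivot(triples):
    """Group EAV triples into one attribute dictionary per entity."""
    entities = defaultdict(dict)
    for entity, attribute, value in triples:
        entities[entity][attribute] = value
    return dict(entities)


def missing_attributes(entities, required):
    """List, per entity, the required attributes that have no value yet."""
    gaps = {}
    for name, attrs in entities.items():
        missing = sorted(set(required) - set(attrs))
        if missing:
            gaps[name] = missing
    return gaps


entities = pivot(TRIPLES)
print(missing_attributes(entities, {"city", "specialty"}))
# {'Clinic B': ['specialty']}
```

A check like this shows where content about an entity is incomplete, which is one way an EAV model can guide what a page still needs to state explicitly.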

Actionable Strategies to Reduce Your Website’s Cost of Retrieval

Technical Audits and Performance Optimization

Regular technical SEO audits identify and rectify issues that increase retrieval cost. Key actions include optimizing images, minifying CSS and JavaScript, leveraging browser caching, and improving server response times. Tools like Google PageSpeed Insights can pinpoint performance bottlenecks. Addressing these technical aspects reduces the time and resources Googlebot spends fetching and rendering pages.
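One of the audit items above, browser caching, can be spot-checked from response headers. The sketch below parses `Cache-Control` values and flags assets with short or missing cache lifetimes. The function names, the sample header values, and the one-day threshold are assumptions for illustration, and treating `no-cache` as zero is a simplification (it permits storage with revalidation).

```python
from typing import Optional


def cache_max_age(cache_control: str) -> Optional[int]:
    """Return max-age in seconds, 0 for no-store/no-cache, None if unspecified."""
    directives = [d.strip().lower() for d in cache_control.split(",") if d.strip()]
    # Simplification: treat both directives as "not reusable without a request".
    if "no-store" in directives or "no-cache" in directives:
        return 0
    for directive in directives:
        if directive.startswith("max-age="):
            value = directive.split("=", 1)[1].strip('"')
            if value.isdigit():
                return int(value)
    return None


def flag_weak_caching(headers_by_url: dict, min_seconds: int = 86400) -> list:
    """Return URLs whose cache lifetime is missing or below `min_seconds`."""
    return sorted(
        url for url, header in headers_by_url.items()
        if (cache_max_age(header) or 0) < min_seconds
    )


# Hypothetical Cache-Control headers collected during an audit.
observed = {
    "/app.js": "public, max-age=31536000",
    "/logo.png": "max-age=600",
    "/style.css": "",
}
print(flag_weak_caching(observed))  # ['/logo.png', '/style.css']
```

The threshold is deliberately a parameter, since a sensible minimum lifetime differs between versioned static assets and frequently changing HTML.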

Streamlining Content Architecture and Internal Linking

A logical, shallow site structure makes important pages accessible within a few clicks from the homepage. Optimizing internal linking for crawlability and semantic flow helps Googlebot understand content relationships. Consolidating duplicate content or using canonical tags prevents Google from wasting resources on redundant pages. A descriptive URL structure aids content understanding and processing. For guidance on resolving crawl issues, refer to our article on how to fix crawl budget issues.
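Duplicate URLs often differ only by tracking parameters, trailing slashes, letter case in the host, or fragments. The following Python sketch groups such variants under one normalized form so they can be consolidated or pointed at a canonical URL. The normalization rules and the list of tracking parameters are assumptions, and some sites treat trailing slashes or query parameters as meaningful, so the rules should be adapted before use.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; extend or trim for a given site.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"gclid", "fbclid"}


def normalize(url: str) -> str:
    """Lowercase scheme and host, drop tracking params, fragment, trailing slash."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_PARAMS
    ]
    query.sort()  # parameter order should not create a new URL
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), "")
    )


def duplicate_groups(urls):
    """Map each normalized URL to its variants, keeping only real duplicates."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: variants for key, variants in groups.items() if len(variants) > 1}


# Hypothetical crawl output.
crawled = [
    "https://Example.com/services/",
    "https://example.com/services?utm_source=newsletter",
    "https://example.com/services#team",
    "https://example.com/blog",
]
for canonical, variants in duplicate_groups(crawled).items():
    print(canonical, len(variants))  # https://example.com/services 3
```

Each group is a candidate for a single canonical tag or a redirect, which keeps Googlebot from fetching the same content under several addresses.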

The ROI of CoR Optimization: What to Expect from an Elite SEO Strategist

Improved Rankings, Faster Indexing, and Enhanced Authority

A lower retrieval cost improves organic visibility. When Googlebot can crawl, render, and understand pages efficiently, new pages and updates are indexed faster. That efficiency supports the perception of an authoritative, trustworthy site, which contributes to stronger rankings and more qualified organic traffic.

Future-Proofing Your Digital Presence

Optimizing retrieval cost aligns with Google’s algorithms, which prioritize semantic understanding and user experience. As generative AI search grows, the clarity and efficiency of information retrieval become paramount. A semantically and technically optimized website is better prepared for these shifts, which supports long-term resilience. Google emphasizes efficient web experiences, as highlighted in Google Search Central blog posts.

Ready to Optimize Your Website’s Cost of Retrieval? Partner with an Expert

Optimizing retrieval cost requires expertise in technical SEO and semantic engineering. For medical clinics, plastic surgeons, and aesthetic practices in London, ensuring a website is discoverable and understood by Googlebot is a business imperative. Abdurrahman Şimşek, a London-based Semantic SEO Strategist, offers the specialized knowledge and Ruxi Data infrastructure to minimize your website’s retrieval cost, build robust topical authority, and secure your dominance in local organic search.

Conclusion

The Cost of Retrieval (CoR) is a foundational yet frequently neglected concept in technical SEO. It has four components: technical speed, rendering complexity, semantic clarity, and entity architecture. Understanding them is the first step toward better search visibility. Optimizing retrieval cost helps Googlebot process content efficiently, leading to better crawl budget allocation, faster indexing, and stronger E-E-A-T signals, all of which are vital for YMYL sectors. Reducing your website’s cost of retrieval is an investment in its long-term organic performance and authority. To discuss a tailored strategy for your website, contact Abdurrahman Şimşek today.

Frequently Asked Questions

What is cost of retrieval (CoR) in SEO, and why is it important for my website?

Cost of retrieval (CoR) describes the computational resources Googlebot expends to crawl, render, and understand your webpage. A lower CoR signifies an efficient website that is less resource-intensive for Google to process, which can improve your search visibility. It is a useful lens for technical SEO work.

How does cost of retrieval specifically impact YMYL and medical websites?

For YMYL (Your Money Your Life) and medical websites, a high cost of retrieval can be particularly detrimental. These sites often have extensive content that needs frequent updates and comprehensive crawling. If Googlebot struggles to process your pages efficiently, critical information might be indexed slowly, which can weaken trust and authority on sensitive health topics.

What technical issues contribute to a high Cost of Retrieval for SEO?

Several technical factors can inflate your site’s Cost of Retrieval, making it harder for search engines to process. These include bloated code, unoptimized large images, overly complex DOM structures, and excessive JavaScript. An inefficient internal linking strategy also forces Googlebot to expend more resources to understand your site’s architecture.

Can a Semantic Content Network improve my site’s cost of retrieval?

Yes, a well-implemented Semantic Content Network significantly reduces the cost of retrieval for SEO. By creating a highly organized structure with logical internal linking, it provides clear pathways for Googlebot. This helps search engines efficiently understand the relationships between your content, streamlining the crawling and indexing process.

Is Cost of Retrieval the same as user-facing page speed metrics?

While related, Cost of Retrieval and page speed are distinct. Page speed, often measured by Core Web Vitals, focuses on user experience, whereas CoR is a search engine-centric metric. Optimizing for a low CoR by cleaning up code and improving efficiency will typically lead to better page speed scores as a positive side effect.

How can I get expert help to optimize my website’s Cost of Retrieval?

Optimizing your site’s Cost of Retrieval requires a deep understanding of technical SEO and semantic content strategies. As a Semantic SEO Strategist specializing in YMYL domains such as private healthcare in London, Abdurrahman Şimşek can audit your site, identify CoR issues, and implement effective solutions to enhance your search visibility and authority. Contact us for a consultation.
