Automated Link Equity: Optimizing Internal Linking for Authority

Implementing automated link equity distribution streamlines internal linking, ensuring optimal PageRank flow and robust topical authority across large, complex websites. This guide details a scalable workflow for programmatic internal linking, focusing on enhancing crawlability, user experience, and overall search engine performance. Readers will learn to leverage advanced tools and semantic principles to manage link silos, optimize anchor text, and address orphan pages, ultimately improving content discoverability and site structure.

Abdurrahman Şimşek, a Holistic SEO Strategist, presents a comprehensive approach to internal linking automation. This content provides actionable strategies for PageRank sculpting, crawl depth management, and integrating SEO data pipelines to build a strong, semantically rich site architecture.


The workflow matters most for large, complex sites, particularly in the competitive medical and aesthetic surgery sectors, where internal linking shapes PageRank flow, topical authority, crawlability, and user experience. The sections below show how tools and semantic principles support that work in 2026.

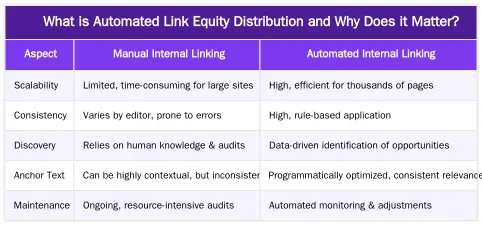

What is Automated Link Equity Distribution and Why Does it Matter?

Automated link equity distribution involves using programmatic methods to analyze, identify, and implement internal links across a website, ensuring that authority and relevance signals are optimally passed between pages. This process is crucial for large websites where manual internal linking becomes impractical, allowing for efficient management of PageRank flow, improved crawlability, and enhanced topical authority.

Link equity represents the value or authority that a hyperlink passes from one page to another. Historically, this concept was closely tied to Google’s PageRank algorithm, which quantified the importance of a page based on the quantity and quality of links pointing to it. While “PageRank sculpting” with nofollow attributes is largely outdated, the underlying principle of distributing authority and relevance through internal links remains fundamental to SEO. Modern internal linking strategies focus on creating a logical, semantically rich site structure that guides both users and search engine crawlers, so that important content receives adequate internal authority and is easily discoverable. This approach moves beyond simple link counts to emphasize contextual relevance and user experience.

Understanding Link Equity and Its Evolution

Link equity, often referred to as ‘link juice,’ is the measure of authority and value passed through hyperlinks. In the early days of SEO, the concept of PageRank, developed by Google founders Larry Page and Sergey Brin, was central to understanding how authority flowed across the web. Websites attempted “PageRank sculpting” by strategically using nofollow tags to concentrate authority on specific pages. However, Google clarified that nofollowed links still consume PageRank, effectively diluting its impact rather than redirecting it. Today, the focus has shifted from rigid PageRank sculpting to a more holistic approach. Effective internal linking now prioritizes creating a clear site architecture, enhancing user navigation, and reinforcing topical relevance. This ensures that valuable content is discoverable and that authority is distributed naturally, signaling to search engines the importance and relationships between pages. Wikipedia provides a comprehensive overview of PageRank’s history and mechanics.

The Mechanics of Link Equity: PageRank, Crawl Depth, and Semantic Relevance

Internal links are the connective tissue of a website, directly influencing how search engines perceive its structure and content hierarchy. Each internal link acts as a vote of confidence, signaling to crawlers that the linked page is relevant and important. This mechanism directly impacts PageRank flow, distributing authority from stronger pages to weaker, yet critical, ones. A well-structured internal linking profile ensures that search engine bots can efficiently crawl and index all relevant content, preventing important pages from becoming isolated. Furthermore, internal links dictate crawl depth, which is the number of clicks required to reach a page from the homepage. Pages buried deep within a site hierarchy may receive less crawl budget and authority, potentially hindering their indexation and ranking potential. The problem of orphan pages, which are discoverable only via sitemaps or external links, severely impedes link equity distribution and crawl efficiency.
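To make the idea of authority flowing through internal links concrete, here is a minimal sketch of the classic PageRank power iteration applied to a small internal link graph. The URLs, the `internal_pagerank` function, and the 0.85 damping factor are illustrative assumptions; this is a simplified model for comparing pages within one site, not a reproduction of how Google scores pages today.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Estimate relative internal authority with a basic PageRank iteration.

    `links` maps each URL to the list of URLs it links to (hypothetical data).
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the "random jump" share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its current score evenly across its outlinks.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outlinks spreads its score over the whole site.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page site.
site = {
    "/": ["/services", "/blog"],
    "/services": ["/", "/services/rhinoplasty"],
    "/blog": ["/", "/services/rhinoplasty"],
    "/services/rhinoplasty": ["/"],
}
scores = internal_pagerank(site)
for url, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{score:.3f}  {url}")
```

In this toy graph the homepage collects the most authority because every page links back to it, and the procedure page outranks the two hub pages because it receives links from both of them.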

Optimizing Crawl Depth and Eliminating Orphan Pages

Crawl depth refers to how many clicks it takes for a search engine crawler to reach a specific page from the homepage. A shallow crawl depth, typically 2-3 clicks, is ideal for important pages, ensuring they are frequently crawled and indexed. Pages located deeper in the site structure may be crawled less often, impacting their visibility. Identifying and eliminating orphan pages is a critical step in optimizing crawl depth and ensuring comprehensive indexation. Orphan pages are those not linked internally from any other page on the website, making them difficult for search engines to discover and pass authority to. Tools like Screaming Frog can identify these pages by comparing crawled URLs against sitemap data and Google Search Console insights. A systematic approach to fixing orphan pages involves integrating them into the main site navigation or contextual content, ensuring they receive appropriate internal links and contribute to the overall site authority.
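Both checks described above can be sketched in a few lines, assuming the internal link graph has already been exported from a crawler into a dictionary. The function names, the sample URLs, and the two-click threshold are hypothetical choices for illustration.

```python
from collections import deque


def crawl_depths(links, start="/"):
    """Breadth-first search: clicks needed to reach each page from `start`."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def find_orphans(links, sitemap_urls, homepage="/"):
    """Sitemap URLs that no other page links to (the homepage is excluded)."""
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a self-link does not rescue a page
    }
    return sorted(set(sitemap_urls) - linked_to - {homepage})


# Hypothetical crawl result: page -> pages it links to.
links = {
    "/": ["/services", "/blog"],
    "/services": ["/", "/services/rhinoplasty"],
    "/blog": ["/", "/blog/recovery-tips"],
    "/blog/recovery-tips": ["/services/rhinoplasty", "/blog/aftercare-faq"],
    "/blog/aftercare-faq": ["/"],
}
sitemap = [
    "/", "/services", "/services/rhinoplasty", "/blog",
    "/blog/recovery-tips", "/blog/aftercare-faq", "/services/old-landing-page",
]

depths = crawl_depths(links)
orphans = find_orphans(links, sitemap)
too_deep = sorted(url for url, depth in depths.items() if depth > 2)
print("Orphans:", orphans)
print("Deeper than 2 clicks:", too_deep)
```

Pages that appear in the sitemap but never as a link target are the orphan candidates, and pages beyond the chosen depth threshold are candidates for a link from a shallower hub page.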

The Power of Semantic Relationships in Internal Linking

Semantic relevance in internal linking goes beyond simply connecting related keywords; it involves establishing clear topical relationships between content pieces. When links connect pages that are semantically related, search engines gain a deeper understanding of the website’s topical authority and expertise. This is particularly important for complex content clusters, where a central “pillar page” might link to numerous supporting “cluster pages.” This structure, often referred to as link silos or content hubs, reinforces the website’s authority on a specific subject. By using descriptive and contextually relevant anchor text, internal links can signal the precise relationship between pages, enhancing both user experience and search engine comprehension. This strategic approach helps build a robust semantic content network, which is vital for establishing dominant topical authority in competitive niches.
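A pillar-and-cluster structure only works if the links are actually in place in both directions. The sketch below audits one cluster under the assumption that the pillar should link to every cluster page and every cluster page should link back to the pillar. The `audit_cluster` helper and the URLs are hypothetical.

```python
def audit_cluster(links, pillar, cluster_pages):
    """Report missing pillar -> cluster and cluster -> pillar links."""
    pillar_targets = set(links.get(pillar, []))
    return {
        # Cluster pages the pillar does not yet link to.
        "pillar_should_link_to": [
            page for page in cluster_pages if page not in pillar_targets
        ],
        # Cluster pages that do not link back up to the pillar.
        "should_link_to_pillar": [
            page for page in cluster_pages if pillar not in links.get(page, [])
        ],
    }


# Hypothetical cluster around a rhinoplasty pillar page.
links = {
    "/rhinoplasty-guide": ["/rhinoplasty-cost", "/rhinoplasty-recovery"],
    "/rhinoplasty-cost": ["/rhinoplasty-guide"],
    "/rhinoplasty-recovery": [],
    "/rhinoplasty-risks": ["/rhinoplasty-guide"],
}
report = audit_cluster(
    links,
    pillar="/rhinoplasty-guide",
    cluster_pages=["/rhinoplasty-cost", "/rhinoplasty-recovery", "/rhinoplasty-risks"],
)
print(report)
```

Each entry in the report is a concrete link to add, which makes the output easy to hand to an editor or to feed into an automated insertion step.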

Building Your Automated Internal Linking Workflow: Tools & Strategies

Establishing an automated internal linking workflow involves a systematic approach to data collection, analysis, and implementation. This process begins with gathering comprehensive data on your existing site structure, content, and performance. Leveraging SEO data pipelines allows for efficient identification of linking opportunities and areas for improvement. Once data is collected, programmatic analysis can pinpoint orphan pages, identify content clusters, and suggest optimal link placements and anchor text. The implementation phase can then involve either automated insertion of links or the generation of actionable recommendations for manual review, balancing efficiency with strategic oversight.

Leveraging SEO Data Pipelines for Link Opportunity Discovery

An effective automated internal linking strategy relies on robust data. The Google Search Console API exposes search performance data, sitemap status, and per-URL index status through URL Inspection, while the Links and Crawl Stats reports in the Search Console interface show existing internal links and crawl activity. With this data, you can identify pages that are under-linked, over-linked, or not linked at all. Screaming Frog is another essential tool for crawling your website and generating detailed reports on internal links, anchor text, and crawl depth. Combining data from these sources with Python scripts allows for powerful analysis. Python can process large datasets to identify content gaps, discover semantically related pages, and pinpoint orphan pages. This data-driven approach enables the programmatic identification of linking opportunities, so that every internal link serves a strategic purpose in distributing authority and relevance.
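As a small illustration of this kind of analysis, the sketch below counts unique inlinks per page from a crawler's link export and flags pages below a chosen minimum. The column names `Source`, `Destination`, and `Anchor` are an assumption about the export format, so check them against your own file. The sample rows and the threshold of two inlinks are made up.

```python
import csv
import io

# Hypothetical link export; in practice this would be read from a CSV file.
EXPORT = """Source,Destination,Anchor
/,/services,Services
/,/blog,Blog
/services,/services/rhinoplasty,rhinoplasty surgery
/blog,/services/rhinoplasty,rhinoplasty
/blog,/blog/recovery-tips,recovery tips
/blog,/blog,Blog
/services,/,Home
/blog,/,Home
"""


def inlink_counts(csv_text, all_urls):
    """Count unique linking pages per URL, ignoring self-links."""
    counts = dict.fromkeys(all_urls, 0)
    seen = set()
    for row in csv.DictReader(io.StringIO(csv_text)):
        edge = (row["Source"], row["Destination"])
        if edge[0] != edge[1] and edge not in seen:
            seen.add(edge)
            counts[edge[1]] = counts.get(edge[1], 0) + 1
    return counts


def under_linked(counts, minimum=2):
    """URLs with fewer than `minimum` unique inlinks."""
    return sorted(url for url, n in counts.items() if n < minimum)


all_urls = [
    "/", "/services", "/blog", "/services/rhinoplasty",
    "/blog/recovery-tips", "/services/facelift",
]
counts = inlink_counts(EXPORT, all_urls)
print(under_linked(counts))
```

Seeding the counts with the full URL list (for example from the sitemap) is what lets pages with zero inlinks show up in the result instead of silently disappearing.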

Automating Anchor Text and Link Placement

Automating anchor text optimization involves using natural language processing (NLP) to analyze content and suggest relevant, descriptive anchor texts. This moves beyond generic phrases like “click here” to contextually rich terms that accurately reflect the linked page’s content. Related keywords and co-occurring terms (often loosely called “LSI keywords” in SEO) can be programmatically identified from the target page’s content to create diverse and semantically relevant anchor texts. Intelligent link placement can also be automated by identifying specific paragraphs or sections within source content that are topically aligned with potential target pages. While automation offers significant scalability, a balance with manual review is crucial. Human oversight ensures that automated links read naturally, provide genuine user value, and avoid over-optimization. This hybrid approach allows for efficient scaling while maintaining quality and strategic intent. For a deeper dive into practical implementation, explore our automated internal linking workflow guide.
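A very simple stand-in for the NLP step is plain phrase matching: given candidate anchor phrases for each target page, find the first source paragraph that already contains one of them. The sketch below assumes the candidate phrases have been prepared elsewhere (for example from the target's title and headings). `suggest_links` and the sample data are hypothetical, and the output is meant as a list of suggestions for human review rather than links to insert blindly.

```python
import re


def suggest_links(source_paragraphs, targets):
    """Suggest one link per target: where to place it and which words to use.

    `targets` maps a target URL to its candidate anchor phrases.
    Longer phrases are tried first because they tend to be more descriptive.
    """
    suggestions = []
    for url, phrases in targets.items():
        found = None
        for phrase in sorted(phrases, key=len, reverse=True):
            pattern = re.compile(r"\b" + re.escape(phrase) + r"\b", re.IGNORECASE)
            for index, paragraph in enumerate(source_paragraphs):
                match = pattern.search(paragraph)
                if match:
                    # Keep the wording exactly as it appears in the source text.
                    found = {"target": url, "paragraph": index, "anchor": match.group(0)}
                    break
            if found:
                break
        if found:
            suggestions.append(found)
    return suggestions


paragraphs = [
    "Swelling is normal in the first week after surgery.",
    "Most patients considering rhinoplasty surgery ask about downtime.",
    "Click here to read more about our clinic.",
]
targets = {
    "/services/rhinoplasty": ["rhinoplasty", "rhinoplasty surgery"],
    "/services/facelift": ["facelift", "facelift procedure"],
}
suggestions = suggest_links(paragraphs, targets)
print(suggestions)
```

Because the anchor is taken from text that already exists in the source paragraph, the suggested link reads naturally and no copy has to be rewritten to accommodate it.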

Beyond Automation: Holistic SEO & Ruxi Data for YMYL Link Equity

While basic automation streamlines internal linking, a truly effective strategy, especially for Your Money or Your Life (YMYL) domains like medical clinics and plastic surgeons, demands a Holistic SEO approach. This integrates semantic engineering and specialized infrastructure to build a robust, authoritative content network. For London-based medical and aesthetic clinics, the stakes are higher due to stringent E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) requirements. Abdurrahman Şimşek’s methodology, leveraging Ruxi Data, transforms clinical expertise into structured, semantically linked content. This not only optimizes the distribution of authority but also minimizes the Search Engine Cost of Retrieval (CoR), making complex medical information more accessible and understandable for search engines.

Ruxi Data’s Role in Semantic Link Equity for Healthcare

Ruxi Data’s LLM-driven semantic engine is designed to address the unique challenges of YMYL content. It automates the creation of detailed topical maps and Entity-Attribute-Value (EAV) modeling, identifying intricate semantic relationships that human analysis might overlook. For medical content, this means accurately linking conditions to treatments, procedures to specialists, and symptoms to diagnoses, building a highly relevant and interconnected knowledge base. This advanced semantic linking ensures that internal links are not just present but are contextually precise, reinforcing E-E-A-T signals for search engines. By structuring content around entities and their relationships, Ruxi Data helps medical clinics and plastic surgeons in London establish themselves as definitive authorities in their respective fields, crucial for ranking in competitive healthcare searches.

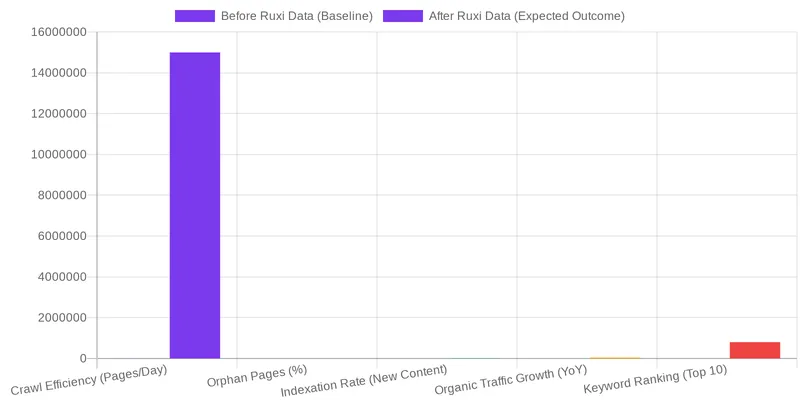

Minimizing Search Engine Cost of Retrieval (CoR) for YMYL Domains

The Search Engine Cost of Retrieval (CoR) refers to the computational resources search engines expend to crawl, process, and understand a website’s content. For complex YMYL domains, a high CoR can hinder indexation and ranking. An optimized, semantically rich internal linking structure, powered by Ruxi Data, significantly reduces this cost. By clearly defining content relationships and distributing authority efficiently, Ruxi Data enables search engines to quickly grasp the site’s topical depth and relevance. This efficiency translates into better crawl rates, faster indexation of new content, and improved visibility for critical medical procedures and services. For London-based medical and aesthetic clinics, this means their specialized expertise is more readily recognized and rewarded by search engines, leading to stronger E-E-A-T signals and ultimately, higher rankings.

Frequently Asked Questions

What is automated link equity and why is it important?

Automated link equity distribution is the process of using software to programmatically create internal links across a website. This ensures that authority (PageRank) flows logically from high-value pages to other relevant content, improving site structure and topical relevance. The goal is to strengthen content clusters and enhance search engine crawlability with minimal manual effort, while leaving room for human review of the suggested links.

How does automated link equity impact new content?

A system for automated link equity ensures new content is immediately integrated into your site’s architecture. When you publish a new page, the workflow automatically identifies relevant existing pages to link from, helping the new content get crawled faster and inherit authority. This process accelerates indexing and performance for new articles.
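One way the "identify relevant existing pages" step could work is a simple text similarity ranking. The sketch below scores existing pages against a new page using cosine similarity over word counts. The stopword list, page texts, and function names are illustrative assumptions, and a production system would more likely use TF-IDF weights or embeddings.

```python
import math
import re
from collections import Counter

# Tiny illustrative stopword list; a real one would be much longer.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "for", "in", "is", "after"}


def term_counts(text):
    """Lowercased word counts with stopwords removed."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(word for word in words if word not in STOPWORDS)


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def link_sources_for(new_text, existing_pages, top_n=2):
    """Existing pages most similar to the new page, best match first."""
    new_vec = term_counts(new_text)
    scored = [
        (cosine(new_vec, term_counts(text)), url)
        for url, text in existing_pages.items()
    ]
    scored = [(score, url) for score, url in scored if score > 0]
    return [url for score, url in sorted(scored, key=lambda su: (-su[0], su[1]))[:top_n]]


existing = {
    "/services/rhinoplasty": "Rhinoplasty surgery reshapes the nose. Recovery after rhinoplasty takes two weeks.",
    "/services/facelift": "A facelift tightens facial skin and reduces wrinkles.",
    "/blog/recovery-tips": "Recovery tips: hydration, sleep, and gentle walks after any procedure.",
}
new_page = "Rhinoplasty recovery timeline: swelling, rest, and returning to work after nose surgery."
sources = link_sources_for(new_page, existing)
print(sources)
```

Pages with no vocabulary in common with the new page are dropped entirely, so an unrelated page is never suggested just to fill the quota.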

Is it safe to implement automated link equity for a large website?

Yes, when executed properly, implementing automated link equity is a safe and highly effective strategy for large sites. A data-driven system bases its linking decisions on semantic relevance and user-defined rules, not random placement. This ensures all links are contextually appropriate and strategically support your topical authority goals.

How does an automated system choose the best anchor text?

An automated internal linking system analyzes the content of both the source and target pages to determine the most semantically relevant anchor text. It identifies primary and secondary keywords on the target page and finds natural phrases within the source page’s content to use as anchors. This avoids generic text like “click here” and sends strong contextual signals to search engines.

Can this type of automation fix existing site structure issues?

Yes, a comprehensive workflow for automated link equity can resolve existing structural problems. The process typically begins with a full site audit to find orphan pages and inefficient authority distribution. The system then builds new, logical links to connect isolated content and strengthen your topic clusters.

What is the difference between a topic cluster and a link silo?

A topic cluster is a content strategy where a central “pillar” page is supported by multiple, related “cluster” articles. A link silo is the technical SEO practice of using a specific internal linking structure to connect these pages. This structure channels authority from the cluster articles up to the main pillar page, reinforcing its importance.

Ruxi Data brings together multi-model AI, automated website crawling, live indexation checks, topical authority mapping, E-E-A-T enrichment, schema generation, and full pipeline automation — from crawl to WordPress publish to social posting — all in one platform built for agencies and freelancers who run on results.